Business Email Compromise (BEC) remains one of the most financially devastating cyber threats facing organizations today. Unlike traditional phishing that relies on mass spam, BEC attackers conduct targeted reconnaissance to impersonate trusted insiders, typically C-level executives, finance professionals, or system administrators, and trick victims into wiring funds, sharing credentials, or approving fraudulent transactions.

What’s new in 2025–2026? Artificial intelligence (AI) has supercharged the reconnaissance phase, turning what once took days or weeks into a matter of hours or even minutes. Attackers now use generative AI to scrape public data, build detailed victim profiles, and craft emails that are nearly indistinguishable from legitimate business correspondence. The result is faster, more convincing attacks that bypass traditional filters and exploit human trust at scale.

In this blog, we'll examine exactly how attackers leverage AI to accelerate every stage of BEC campaigns, identify the specific high-risk roles they target most aggressively, review the latest statistics, and explore how Human Risk Intelligence can help security leaders move from reactive training to proactive, data-driven defense.

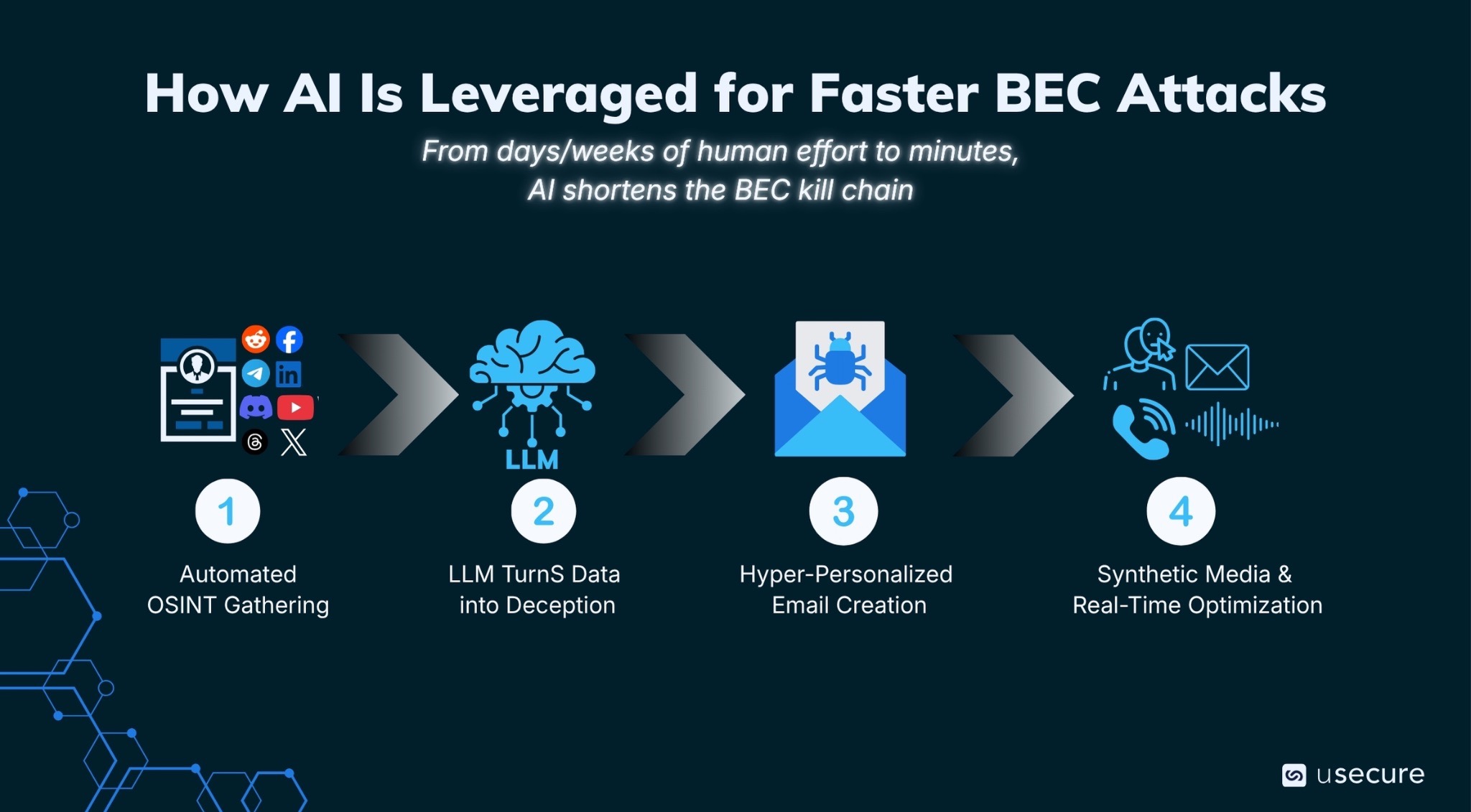

How AI Is Leveraged for Faster BEC Attacks

Attackers have dramatically shortened the BEC kill chain by weaponizing AI at every stage of reconnaissance and execution. A slow, labor-intensive process that once required days or weeks of human effort can now be completed in minutes, which makes these attacks more scalable and alarmingly effective. Here is how a modern AI-driven BEC attack unfolds, step by step.

1. Automated OSINT Gathering at Scale

Modern BEC campaigns begin with automated open-source intelligence (OSINT) gathering. AI tools scan LinkedIn profiles, company websites, social media posts, and even seemingly harmless family vacation photos or personal updates. This process maps out entire organizational hierarchies, identifies key decision-makers in finance and administration, and uncovers travel schedules, ongoing projects, vendor relationships, and personal details that attackers use to create believable urgency or rapport.

2. Large Language Models Turn Data into Deception

AI systems aggregate scattered public data into comprehensive target profiles, complete with writing style analysis, speech patterns from public recordings, and contextual insights such as recent board meetings, quarter-end pressures, or internal system migrations. This rich intelligence then feeds directly into large language models that transform raw data into highly convincing attack material.

3. Hyper-Personalized Email Creation

These models produce polished, context-aware emails that mimic the exact tone, jargon, and signature style of the impersonated executive or colleague. The messages include accurate references to real projects, colleagues, or recent events. They incorporate subtle urgency pretexts that feel authentic, such as “urgent wire transfer needed before quarter close to lock in the vendor discount we discussed last week” or “vendor invoice update required due to the new ERP system migration we announced in the all-hands meeting.”
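On the defensive side, the pretexts quoted above share a recognizable shape: time pressure combined with a payment or banking change. The following is a minimal sketch, not a production detector, of how a mail pipeline might flag that combination for out-of-band verification. The phrase lists and the `pretext_signals` function are hypothetical and chosen only for illustration; AI-written emails can easily avoid fixed keywords, so a rule like this is best treated as one trigger for a callback check rather than a filter.

```python
import re

# Hypothetical phrase lists for illustration; a real deployment would tune these.
URGENCY_PATTERNS = [
    r"\burgent(ly)?\b",
    r"\bbefore quarter close\b",
    r"\bimmediately\b",
]
PAYMENT_PATTERNS = [
    r"\bwire transfer\b",
    r"\bbanking details\b",
    r"\binvoice\b",
]


def pretext_signals(body: str) -> dict:
    """Count urgency and payment cues and flag messages that contain both."""
    text = body.lower()
    urgency_hits = sum(1 for p in URGENCY_PATTERNS if re.search(p, text))
    payment_hits = sum(1 for p in PAYMENT_PATTERNS if re.search(p, text))
    return {
        "urgency_hits": urgency_hits,
        "payment_hits": payment_hits,
        # Both cue types together suggest verifying via a known phone number.
        "needs_out_of_band_check": urgency_hits > 0 and payment_hits > 0,
    }


sample = (
    "Urgent wire transfer needed before quarter close to lock in "
    "the vendor discount we discussed last week"
)
result = pretext_signals(sample)
print(result["needs_out_of_band_check"])
```

The design choice here is to route flagged messages to a human verification step instead of blocking them, since legitimate finance emails also mention invoices and deadlines.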

4. Synthetic Media and Real-Time Optimization

Beyond text, attackers increasingly pair these emails with synthetic media. They generate deepfake voice samples from earnings calls or conference appearances for follow-up phone calls, or create realistic email threads that appear to continue an existing internal conversation. Some campaigns even test multiple variants of subject lines and phrasing in real time to optimize open and click rates. As a result, even lower-skilled threat actors can launch attacks that previously required advanced social engineering expertise.

This acceleration is dramatic. What used to take 16 hours or more for reconnaissance and crafting can now occur in as little as 5 minutes, enabling attackers to strike at the optimal moment when targets are distracted or under pressure. The outcome is a new generation of BEC attacks that bypass traditional email filters more effectively and exploit human psychology with frightening precision.
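To put that acceleration in perspective, the short calculation below works out the speed-up implied by the two figures quoted above (16 hours versus 5 minutes). It is simple arithmetic on those numbers and assumes nothing beyond them; the variable names are illustrative.

```python
# Figures quoted in the text: 16 hours of manual work vs. 5 minutes with AI.
manual_minutes = 16 * 60
ai_minutes = 5

# How many times faster the AI-assisted workflow is.
speedup = manual_minutes / ai_minutes
print(f"Implied speed-up: {speedup:.0f}x")  # prints "Implied speed-up: 192x"
```

In other words, the time once needed to prepare a single attack could now cover roughly 192 of them.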

By mid-2024, an estimated 40% of BEC emails were already AI-generated, and that proportion has only grown. Attackers no longer need native-level English or deep research skills because AI handles the heavy lifting. That puts enterprise-grade campaigns within reach of even low-sophistication threat actors.

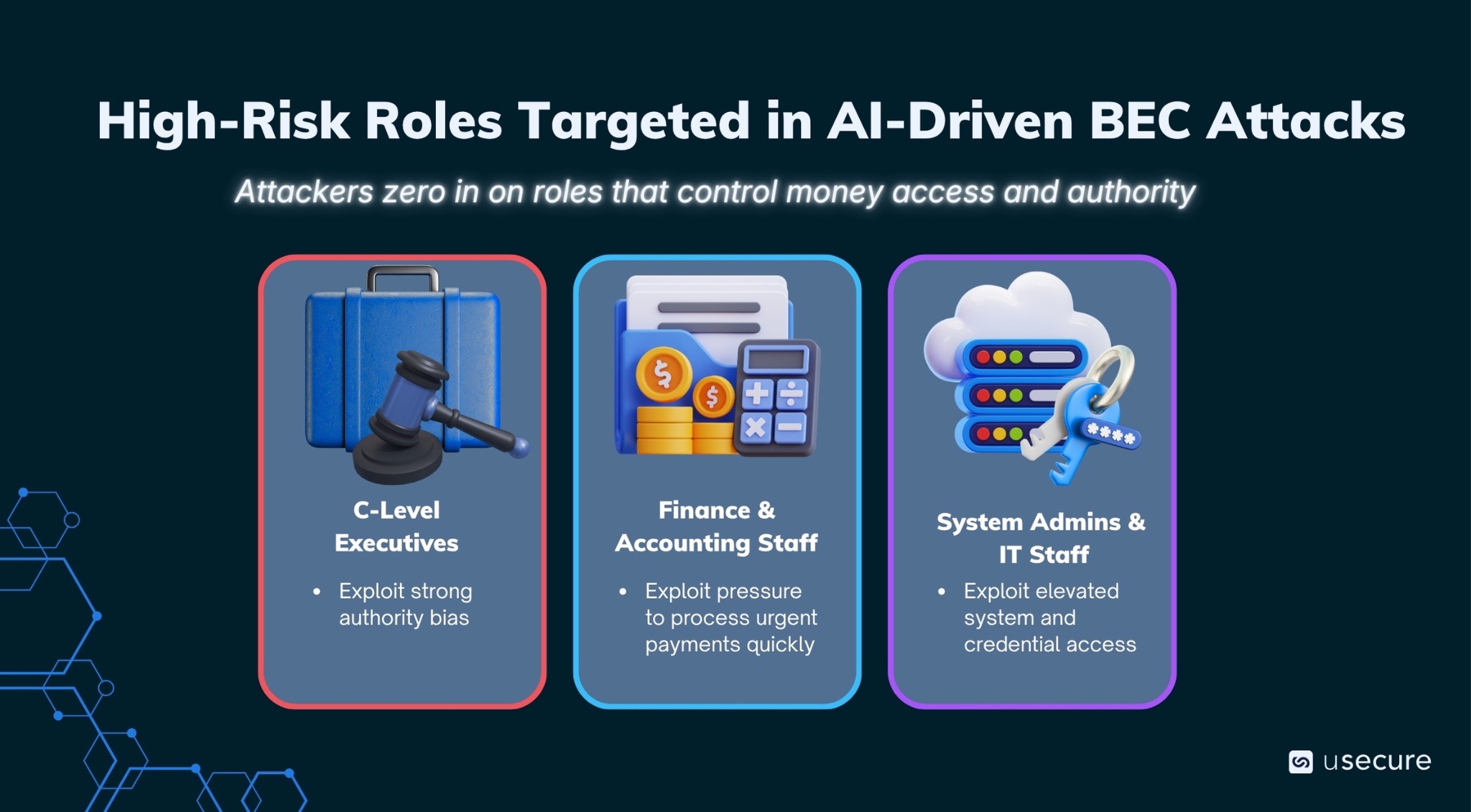

High-Risk Roles Targeted in AI-Driven BEC Attacks

Attackers do not cast a wide net with generic phishing blasts. They zero in on specific roles that control money, access, or decision-making authority because these positions deliver the highest and fastest return on investment. AI supercharges this precision by enabling rapid profiling and hyper-personalized attacks that feel entirely legitimate. The following three groups are most frequently targeted:

- C-level executives are impersonated in the vast majority of BEC attacks (around 89%) because of strong authority bias. Employees are conditioned to comply quickly when a request appears to come from the top. A single convincing email can trigger massive wire transfers without multiple approvals.

- Finance and accounting staff process payments daily, handle invoices, manage vendor relationships, and execute payroll or treasury functions. Attackers tend to impersonate vendors (e.g. "update our banking details for the upcoming payment") or internal personnel (e.g. "approve this urgent transfer before quarter close"). Because finance teams operate under tight deadlines and high volume, they are particularly vulnerable to pressure tactics.

- System administrators and IT staff hold elevated system access, manage credentials, and can be leveraged for "internal IT support" pretexts that open backdoors for deeper compromise. An admin might receive a request to "reset credentials for the new CFO" or urgently patch a "vendor system integration issue." Once inside, attackers can pivot to financial systems or plant persistence mechanisms.

The Devastating Real-World Toll of BEC Attacks

The latest statistics paint a sobering picture of how quickly the BEC threat landscape is evolving.

- According to the FBI’s 2025 Internet Crime Complaint Center (IC3) report, total cybercrime losses reached a record $20.88 billion, with BEC alone responsible for over $3.046 billion, or roughly 14.6% of all reported cyber losses. AI-driven scams accounted for 22,364 complaints and approximately $893 million in documented losses. Businesses reported over $30 million in losses to BEC scams involving AI.

- Beyond the raw dollar figures, the attack landscape has shifted dramatically in just a few years. BEC attacks rose 15% overall in 2025 compared to the previous year, according to LevelBlue SpiderLabs research, which intercepted an average of more than 3,000 BEC messages per day.

- Osterman Research indicated that 60% of organizations (especially smaller ones) now encounter frequent BEC attempts, with AI contributing to the surge in volume and automation.
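The percentages behind the IC3 figures above are easy to reproduce. The sketch below recomputes the BEC share of total losses and derives two values that are not stated in the report excerpt and are shown only as back-of-the-envelope estimates: the AI-driven share of total losses and the average loss per AI-related complaint. Because the inputs are quoted as "over" and "approximately", the outputs are approximations too.

```python
# Figures as quoted from the IC3 report in the text (USD).
total_losses = 20.88e9
bec_losses = 3.046e9
ai_losses = 893e6
ai_complaints = 22_364

# Share of all reported losses attributed to BEC (stated as roughly 14.6%).
bec_share = bec_losses / total_losses * 100

# Derived estimates, not stated in the source.
ai_share = ai_losses / total_losses * 100
avg_loss_per_ai_complaint = ai_losses / ai_complaints

print(f"BEC share of losses: {bec_share:.1f}%")  # prints 14.6%
print(f"AI-driven share of losses: {ai_share:.1f}%")  # prints 4.3%
print(f"Average loss per AI-related complaint: ${avg_loss_per_ai_complaint:,.0f}")
```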

Human Risk Intelligence: Turning Data into Defensible Action

Traditional security awareness training alone is no longer enough against AI-augmented threats that evolve daily. What’s needed now is Human Risk Intelligence (HRI).

HRI aggregates signals from employee behavior, identity access patterns, security hygiene, and real-time threat intelligence to create a continuous, actionable view of human-led risk. Instead of relying on generic monthly training, HRI identifies which users have weak password habits and which executives might be prime deepfake targets. By prioritizing high-risk individuals and delivering just-in-time, role-specific interventions, organizations can measurably reduce human risk.
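As a rough illustration of the prioritization idea, the sketch below combines a few per-user signals into a single score and ranks users by it. Everything in it is an assumption made for the example: the `UserSignals` fields, the weights, and the role multipliers are hypothetical and do not describe how any particular HRI product computes risk.

```python
from dataclasses import dataclass


@dataclass
class UserSignals:
    name: str
    role: str                # e.g. "executive", "finance", "it_admin"
    weak_password: bool      # security hygiene signal
    failed_phish_sims: int   # behavior signal: recent failed simulations
    public_exposure: float   # 0.0 to 1.0, how much is discoverable via OSINT


# Hypothetical multipliers reflecting the high-risk roles discussed earlier.
ROLE_WEIGHT = {"executive": 1.5, "finance": 1.4, "it_admin": 1.3}


def risk_score(user: UserSignals) -> float:
    """Combine signals into a 0-100 score using illustrative weights."""
    base = (
        30 * user.weak_password
        + 10 * min(user.failed_phish_sims, 3)
        + 40 * user.public_exposure
    )
    return round(min(100.0, base * ROLE_WEIGHT.get(user.role, 1.0)), 1)


def prioritize(users: list[UserSignals]) -> list[UserSignals]:
    """Return users ordered from highest to lowest risk."""
    return sorted(users, key=risk_score, reverse=True)


users = [
    UserSignals("Alice", "executive", False, 0, 0.9),
    UserSignals("Bob", "finance", True, 2, 0.2),
    UserSignals("Cara", "sales", False, 1, 0.1),
]
for u in prioritize(users):
    print(u.name, risk_score(u))
```

A ranked list like this is what would drive the just-in-time, role-specific interventions: the top entries get attention first instead of everyone receiving the same monthly module.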

Protecting Your Organization in the AI Era

AI has not just made BEC attacks more convincing; it has made them faster and more scalable than ever. Organizations that continue relying solely on perimeter defenses or one-size-fits-all training will remain vulnerable. HRI combines data-driven insights with targeted education and real-time interventions. This proactive approach enables security leaders to stay ahead of the rapidly evolving reconnaissance curve.

The attackers are using AI today. The question is: will your defenses evolve just as quickly? Explore the demo hub to see our security awareness solution and the wider human risk suite in action.
