AI-Generated Malware & Scam Kits: Lowering the Barrier for Low-Skill Attackers

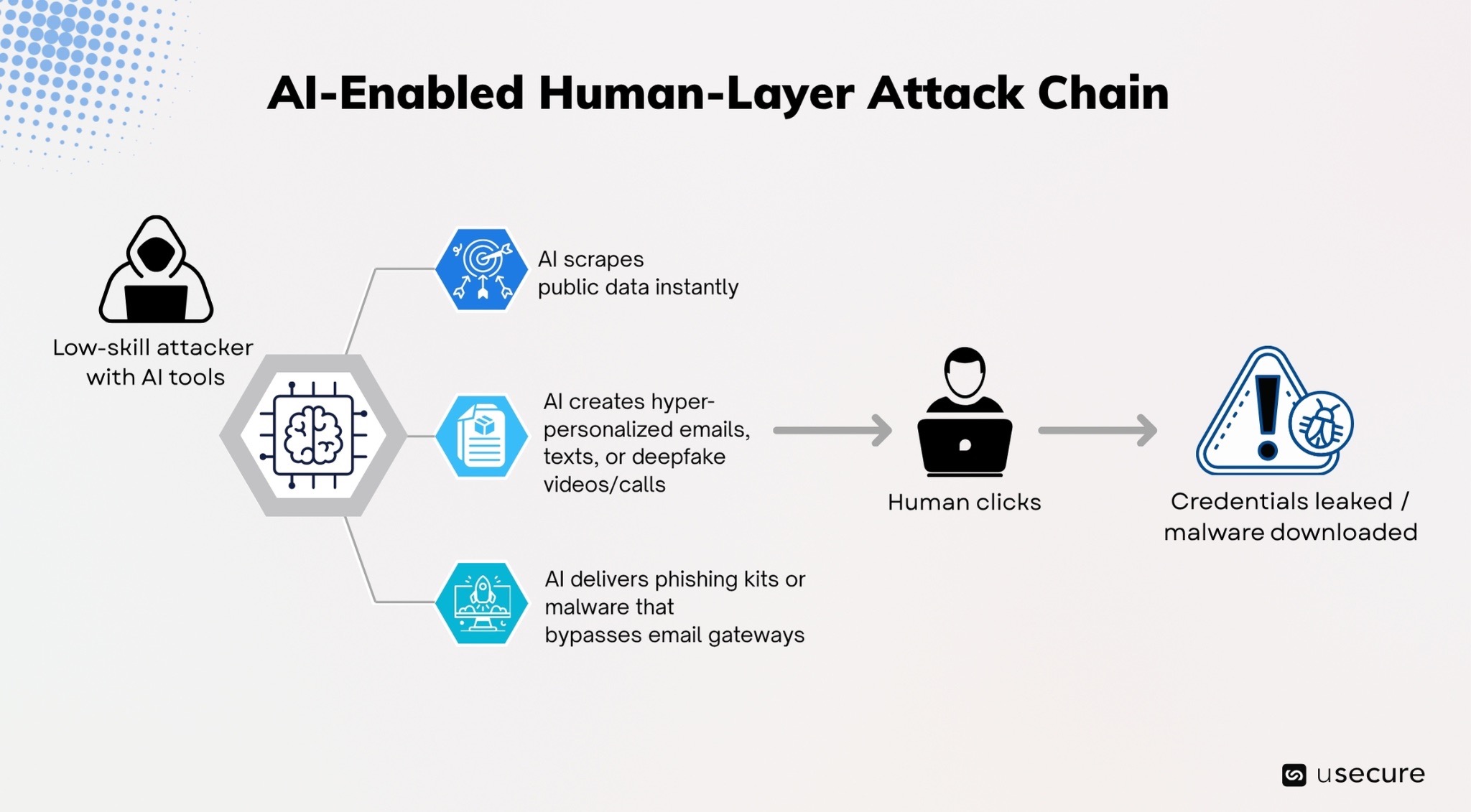

Cybercrime has crossed a dangerous threshold in recent years. Artificial intelligence didn’t just speed up attacks; it democratized them. What once required elite coding skills, expensive infrastructure, and months of planning can now be executed by anyone with a computer, a credit card, and a few prompts. AI-generated malware and scam kits have slashed the technical barrier to entry, flooding the threat landscape with low-skill attackers who punch far above their weight. The primary target of these attacks is the human layer: your employees, contractors, and end users remain the most exploited entry point.

In this blog, we’ll unpack how AI is supercharging low-skill threats, share the latest real-world examples and statistics, and show why only a proactive, intelligence-driven approach to the human layer can stop them.

How AI Is Lowering the Barrier for Low-Skill Attackers

Traditional cybercrime demanded expertise, but today malicious AI tools act like a force multiplier, turning low-skill attackers into highly effective threats capable of launching sophisticated, enterprise-grade campaigns at scale. The result is an explosion in both the volume and quality of attacks.

- Lightning-Fast Reconnaissance

- Fast, automated reconnaissance is the single biggest reason low-skill attackers can now run enterprise-grade campaigns. Attackers feed a simple prompt into an uncensored AI tool (WormGPT, FraudGPT, or variants built on Grok/Mixtral) along with a target list. The AI then scrapes publicly available data from LinkedIn, company websites, recent press releases, and leaked credential dumps. In seconds it knows:

- Your exact job title and reporting structure

- The name of your current project or recent initiative

- Your manager’s name and typical communication style

- Recent company news or internal terminology

- Hyper-personalized phishing emails in seconds

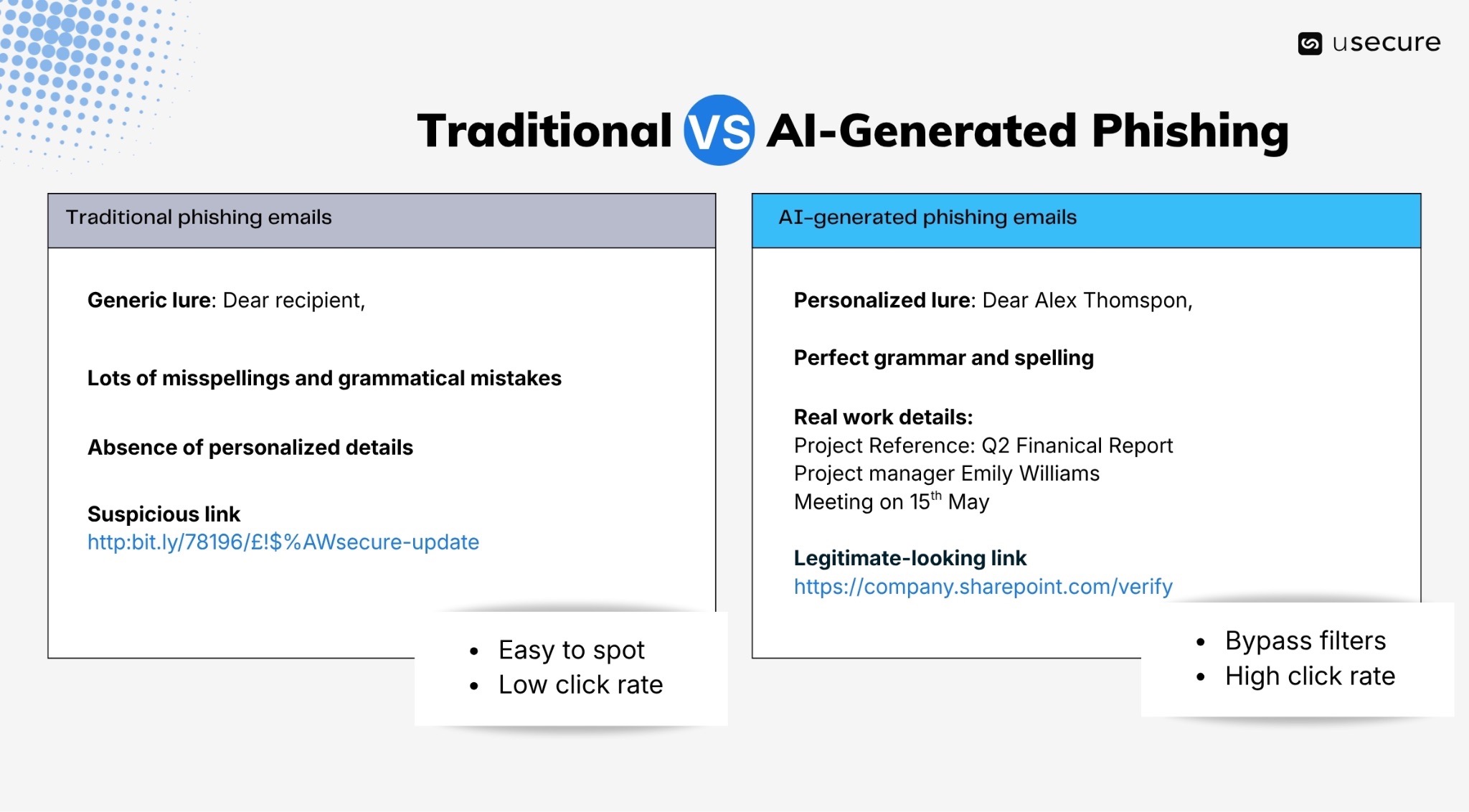

- The AI produces polymorphic emails, each one unique. It varies subject lines, greeting phrasing, sentence structure, tone, and even subtle formatting so that no two messages look identical. Traditional email security gateways rely on pattern matching, known malicious signatures, or statistical anomalies (odd grammar, repetitive phrasing, suspicious keywords). AI-generated emails contain none of those red flags:

- Perfect, native-level grammar and spelling

- Natural conversational flow that matches your organization’s internal style

- Contextual urgency tied to real events (“Following up on the Q4 budget review you mentioned in last week’s stand-up…”)

- Legitimate-looking links or attachments that initially pass reputation checks

- Delivery & exploitation tools that bypass email gateways

- Low-skill attackers can now rent AI tools on Telegram for as little as $30/month to build attacks:

- Uncensored LLMs trained specifically on phishing and BEC templates. They produce flawless Business Email Compromise messages that mimic executive tone.

- Full Phishing-as-a-Service (PhaaS) kits that automate mass mailing with built-in MFA bypass and real-time credential harvesting. They generate and send thousands of individually personalized emails that read and behave like real human correspondence.

The Explosive Growth of AI-Powered Cybercrime

The statistics below paint a sobering picture: AI has transformed phishing and scams from niche, skill-intensive operations into high-volume, high-success weapons accessible to even the least sophisticated attackers. The result is a dramatic escalation in both the quantity and quality of attacks.

- 82.6% of all phishing emails now contain AI-generated elements, up dramatically from near zero a few years prior.

- AI scams surged 1,210% in 2025, far outpacing traditional fraud (195% growth), with projected global losses hitting $40 billion by 2027.

- AI-generated phishing emails achieve 54% click rates, versus just 12% for human-written ones.

- Credential phishing attempts increased 703% in the second half of 2024 alone, with the trend accelerating through 2025.

- There was a 14x surge in AI-generated phishing attacks in December 2025 that bypassed email filters and landed in inboxes.

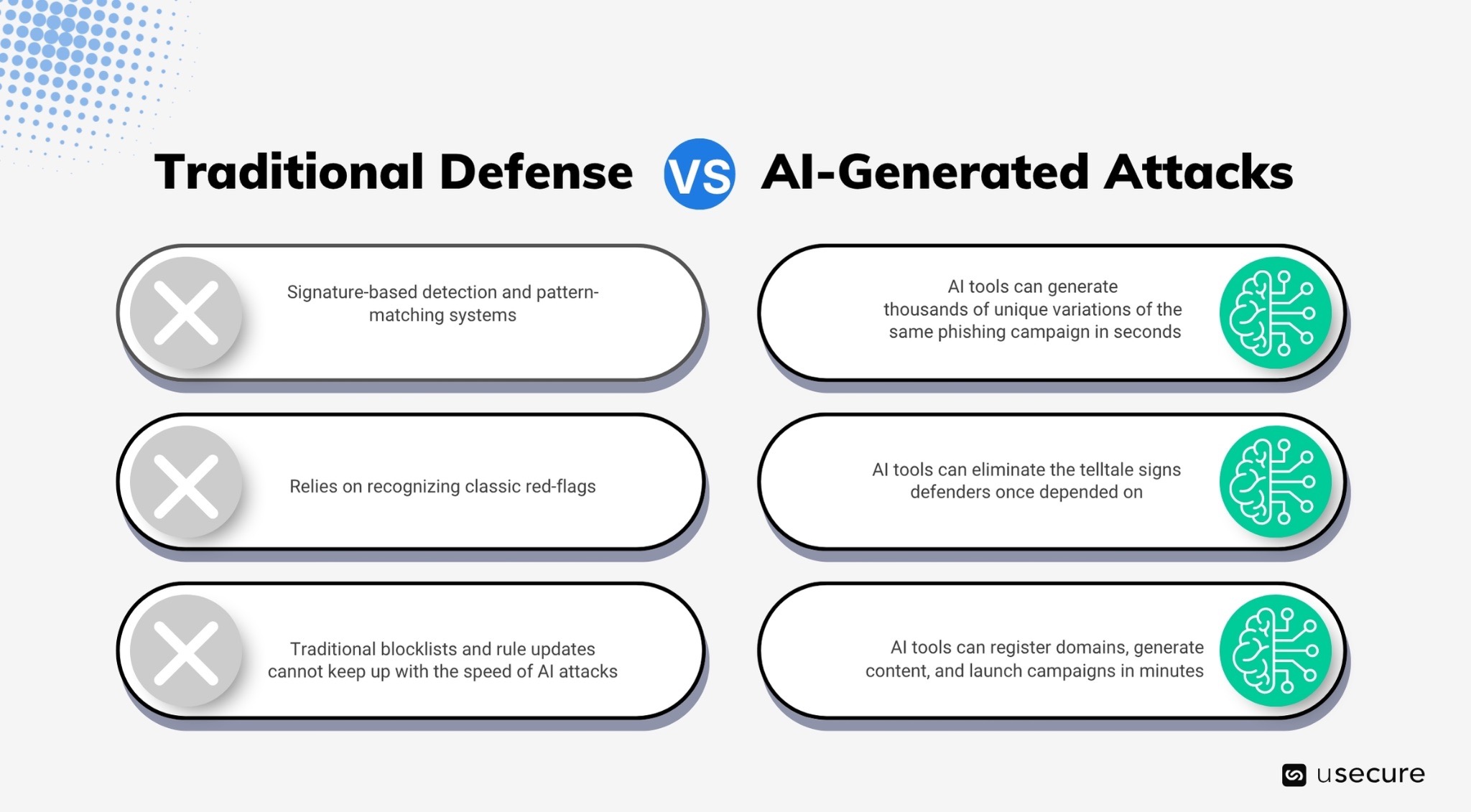

Why Traditional Filters Fail Against AI-Generated Attacks

Traditional email security tools, such as Secure Email Gateways (SEGs), signature-based filters, keyword blacklists, and basic machine learning models, were designed for an earlier era of cyber threats. They excelled at catching mass spam with obvious red flags: broken English, repetitive templates, suspicious domains, known malicious URLs, or malformed attachments. Today, those assumptions no longer hold.

- Polymorphism and Novelty: AI tools generate thousands of unique variations of the same phishing campaign in seconds. Each email differs in sentence structure, word choice, formatting, and subtle phrasing. Signature-based detection and pattern-matching systems rely on recognizing previously seen malicious indicators. When every message is new and never-before-seen, signatures become obsolete almost instantly. By the time security vendors update their databases, the campaign has already evolved or ended.

- Absence of Classic Red Flags: AI eliminates the telltale signs defenders once depended on. Studies show AI-generated phishing emails now bypass major providers at alarming rates. In controlled tests with GPT-4o-generated messages, Gmail and Outlook allowed many through, with one analysis highlighting pervasive detection failures across common filters.

- Speed and Scale Outpace Updates: Low-skill attackers use AI tools to register domains, generate content, and launch campaigns in minutes. Traditional blocklists and rule updates simply cannot keep up with zero-hour, polymorphic attacks. Reports indicate up to 50% (or higher in targeted scenarios) of sophisticated AI-driven phishing and BEC attempts now slip past legacy Secure Email Gateways.
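To make the polymorphism point concrete, here is a minimal sketch of why exact-match signatures break down. It assumes a deliberately simplified filter that blocks a message only when the hash of its body is already on a blocklist. Real gateways are more sophisticated, and the `signature` and `is_blocked` helpers and the sample messages are hypothetical, made up for illustration.

```python
import hashlib


def signature(body: str) -> str:
    """Exact-match signature: SHA-256 of the lowercased, whitespace-normalized body."""
    normalized = " ".join(body.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


# Hypothetical blocklist built from a single previously reported message.
known_bad = "Please review the attached invoice and confirm payment today."
blocklist = {signature(known_bad)}


def is_blocked(body: str) -> bool:
    """Return True only if this exact (normalized) body has been seen before."""
    return signature(body) in blocklist


# Three messages with the same intent; only the first matches the known wording.
variants = [
    "Please review the attached invoice and confirm payment today.",
    "Could you look over the attached invoice and confirm payment by end of day?",
    "Kindly check the invoice I attached and approve the payment today.",
]

results = [is_blocked(v) for v in variants]
print(results)
```

Only the message that matches the reported wording is caught. The two rewordings carry the same request but produce entirely different hashes, which is the gap that behavior-focused defenses aim to cover.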

AI Attacks in Action: 2025–2026 Case Studies

These examples from 2025–2026 illustrate how rapidly AI-powered tools have moved from underground experiments to mainstream cybercrime commodities.

- InboxPrime AI & new PhaaS kits: Launched in late 2025, InboxPrime AI automates personalized email generation inside phishing kits like BlackForce and GhostFrame. These kits (priced $200–$350 on Telegram) include MitB (Man-in-the-Browser) attacks that capture MFA codes in real time.

- Tycoon2FA disruption: Microsoft and partners took down one of the most popular AiTM (Adversary-in-the-Middle) phishing kits that had stolen thousands of Microsoft 365 credentials. Even after disruption, copycat kits proliferated.

- Deepfake & voice-cloning scams: An engineering firm lost $25 million when attackers used a deepfake video of the CFO in a video call. Fraudsters need only three seconds of audio to create voice clones with up to 85% accuracy. Deepfakes online grew 900% annually.

- AI malware like PromptLock and LameHug: Low-skill actors use tools like WormGPT and FraudGPT to generate polymorphic ransomware and self-mutating code. One report noted autonomous malware in 23% of payloads by end of 2025.

HRI: The Intelligence Layer That Stops AI Threats

Technical controls alone can no longer keep pace with the rapid evolution of AI-generated threats. Human Risk Intelligence (HRI) has become the essential new layer of defense. It combines awareness training, phishing simulations, identity hygiene, and access signals into a continuous, explainable, and actionable view of human-led risk. HRI delivers:

- AI-driven, role-based micro-learning that automatically enrolls users based on their exact knowledge gaps and recent phishing simulation failures.

- Phishing simulations that coach users the moment they slip, turning every “mistake” into immediate behavior change.

- Dark-web credential monitoring to swiftly detect exposed credentials and automated policy acknowledgments to strengthen compliance.

- Insightful dashboards that provide human risk scores for every user.
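As a rough illustration of how signals like these could roll up into a per-user score, the sketch below combines a phishing simulation failure rate, exposed credentials, and training completion into a single 0 to 100 number. This is not how any particular HRI product calculates risk. The `UserSignals` fields, the weights, and the cap of three exposed credentials are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class UserSignals:
    """Hypothetical per-user inputs; field names are illustrative only."""
    simulations_sent: int
    simulations_failed: int
    exposed_credentials: int
    training_completion: float  # fraction of assigned training completed, 0.0 to 1.0


# Assumed weights for the example; a real program would tune these.
WEIGHTS = {"phish": 0.5, "creds": 0.3, "training": 0.2}


def risk_score(s: UserSignals) -> float:
    """Weighted sum of three normalized signals, scaled to 0-100 (higher is riskier)."""
    fail_rate = s.simulations_failed / s.simulations_sent if s.simulations_sent else 0.0
    # Cap exposed credentials at 3 so a single signal cannot dominate the score.
    cred_risk = min(s.exposed_credentials, 3) / 3
    training_gap = 1.0 - max(0.0, min(s.training_completion, 1.0))
    score = (
        WEIGHTS["phish"] * fail_rate
        + WEIGHTS["creds"] * cred_risk
        + WEIGHTS["training"] * training_gap
    )
    return round(100 * score, 1)


# Example: failed half of ten simulations, no exposed credentials, training complete.
example = risk_score(UserSignals(10, 5, 0, 1.0))
print(example)
```

Keeping the score as a transparent weighted sum means each point of risk can be traced back to a specific signal, which fits the goal of a view that is explainable and actionable.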

Protect the Human Layer with HRI

Low-skill threat actors are now launching enterprise-grade campaigns at scale, and every click, call, or shared credential represents a potential entry point into your organization. The solution is to stop treating people as the weakest link and start turning them into your strongest defense. HRI empowers organizations to do exactly that — delivering adaptive training, real-time coaching, and continuous risk visibility that evolves as quickly as the threats themselves.

Ready to turn human risk into human resilience? Explore the demo hub to see our security awareness solution and the wider human risk suite in action.
