Deepfakes, AI-generated synthetic media that convincingly mimic real people, are no longer a futuristic novelty.

Deepfakes have become a cornerstone of cyber threats, supercharging social engineering attacks with unprecedented speed and realism. Voice and video cloning allow attackers to impersonate executives, family members, or trusted figures in real time, bypassing traditional security measures and exploiting human vulnerabilities.

That shift changes the defender’s job. Security is no longer only about blocking malicious links; it is about managing human risk in real time, because the human is often the final control.

This post looks at the mechanics, examples, statistics, and risks of this escalation, and explores human risk intelligence as a defense against these threats.

Understanding The Technology Behind Deepfakes

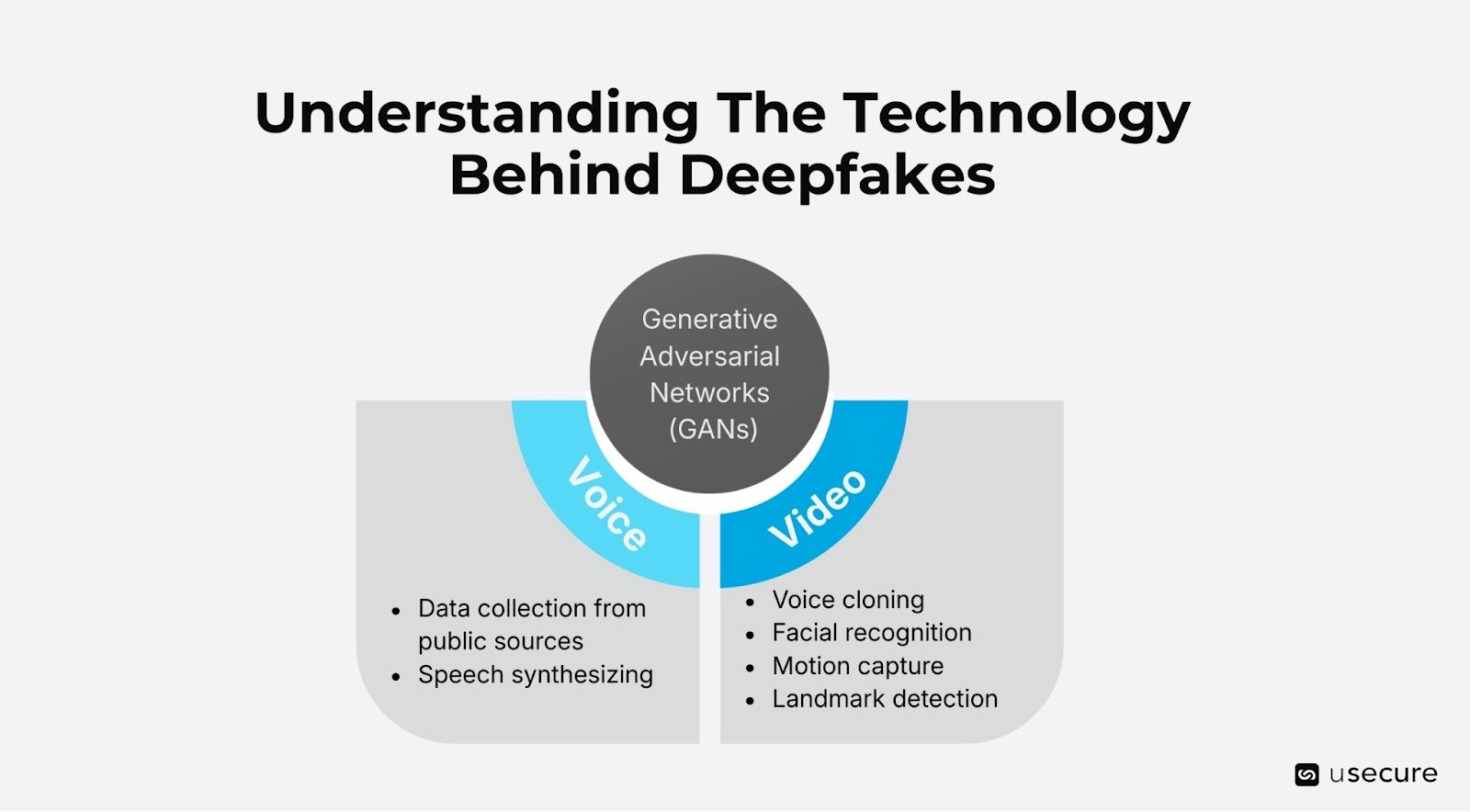

Deepfakes leverage advanced machine learning techniques, primarily generative adversarial networks (GANs), to create hyper-realistic audio and video content. In a GAN setup, two neural networks compete: one (the generator) produces fake content, while the other (the discriminator) tries to tell it apart from real samples. Training continues until the output is very hard to distinguish from the real thing.
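For readers who want the competition stated precisely, the standard GAN training objective can be written as a two-player minimax game. The notation here is the conventional textbook one rather than anything specific to a particular deepfake tool: G is the generator, D is the discriminator, x is a real sample, and z is the random noise the generator starts from.

```latex
\min_{G} \max_{D} \;
  \mathbb{E}_{x \sim p_{\text{data}}}\bigl[\log D(x)\bigr]
  + \mathbb{E}_{z \sim p_{z}}\bigl[\log\bigl(1 - D(G(z))\bigr)\bigr]
```

The discriminator is rewarded for scoring real samples high and generated ones low, while the generator is rewarded for pushing D(G(z)) up, which is the "critique until it passes" loop described above.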

The Mechanics of Voice and Video Cloning

- Voice cloning

Voice cloning starts with data collection: attackers harvest audio from public sources such as podcasts and voicemails, or place silent phone calls in which victims unwittingly provide a sample simply by saying "hello." Advanced AI models can then synthesize speech that matches the target's timbre, rhythm, intonation, accent, and emotional inflection from just a few seconds of audio.

- Video cloning

Video cloning adds layers of complexity. It combines facial recognition with motion capture and landmark detection to map a target's facial features, then blends them onto another body or scene, swaps faces, or generates entirely new expressions.

Deepfakes are no longer just about swapping celebrity faces for laughs; they have evolved into crafting believable interactions. The barrier to entry has plummeted: free online tools and deepfake-as-a-service platforms make the technology accessible to anyone with basic tech skills.

The speed of deepfake attacks is alarming. In 2026, real-time cloning during live calls is commonplace, thanks to low-latency AI frameworks. This enables "faster wins" in social engineering: traditional phishing might take days of back-and-forth emails, but a cloned video call can extract credentials or approvals in minutes.

Real-World Examples of Deepfake-Enabled Attacks

Deepfake-driven cybercrime already reaches further than most people imagine and has the potential to disrupt every industry.

- Insurers, for example, are absorbing heavy losses as criminals use fabricated deepfake materials to support fraudulent claims.

- Rival businesses can also exploit this technology by producing fake customer reviews or manipulated videos and images that depict a competitor’s product as defective, harming brand reputation.

- One notorious example is the 2025 Singapore Zoom scam. Fraudsters used an advanced deepfake video during a Zoom conference call to impersonate a company's senior executives. The targeted employee, convinced by the realistic visuals, the audio, and the multi-person meeting format, authorized a fraudulent wire transfer of approximately USD 499,000 to attacker-controlled accounts. The scam was discovered only afterward, through follow-up verification over legitimate channels.

- In 2024, a Hong Kong multinational lost USD 25 million after a deepfake video conference in which cloned executives appeared to authorize the payments.

The broader economy and society are also impacted by deepfakes.

- In 2023, a fabricated AI-generated image showing an explosion at the Pentagon briefly rattled the stock market before authorities were able to debunk it. More advanced and coordinated deepfake attacks could result in substantial drops in company valuations and widespread financial instability across global markets.

- Even elections aren't safe. Deepfakes of politicians endorsing false narratives disrupted campaigns in multiple countries during 2024.

These examples show deepfakes enabling "faster wins" by compressing the attack timeline from reconnaissance to execution.

Latest Statistics: The Alarming Rise

The proliferation of deepfakes is backed by stark data. According to a 2025 Pindrop study, deepfake fraud could rise 162% that year, with contact center fraud reaching $44.5 billion. DeepStrike estimates online deepfakes surged from 500,000 in 2023 to 8 million in 2025, with annual growth accelerating.
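To put the volume figures in perspective, the short calculation below derives the year-over-year multiplier implied by the two data points quoted above (500,000 in 2023 and 8 million in 2025). It is a back-of-the-envelope sketch that assumes constant compound growth between those two years; the function name is illustrative.

```python
def annual_growth_factor(start: float, end: float, years: float) -> float:
    """Constant year-over-year multiplier that turns `start` into `end`."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end, and years must all be positive")
    return (end / start) ** (1 / years)


# Figures quoted in the text: 500,000 deepfakes in 2023, 8 million in 2025.
start_count, end_count = 500_000, 8_000_000
factor = annual_growth_factor(start_count, end_count, years=2)

print(f"Overall growth: {end_count / start_count:.0f}x")
print(f"Implied annual growth: {factor:.1f}x per year")
```

Under that assumption, a 16-fold increase over two years works out to roughly a quadrupling each year.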

In 2025, research found that 46% of deepfake attacks involved video, followed by images at 32%. Among fraud experts, 37% report encountering voice deepfakes and 29% report encountering video ones. A VikingCloud report notes that over a quarter of small-to-medium businesses experienced deepfake schemes in 2025.

Producing a deepfake can cost as little as USD 1.33, yet projections put deepfake-related fraud at as much as USD 1 trillion globally in 2024.

Globally, deepfake-as-a-service exploded in 2025, driving AI identity fraud.

Human Risk Intelligence Angle: People as the Frontline

Combating deepfakes requires a multi-layered approach. Human Risk Intelligence (HRI) plays a central role by helping organizations understand, measure, and reduce human vulnerabilities.

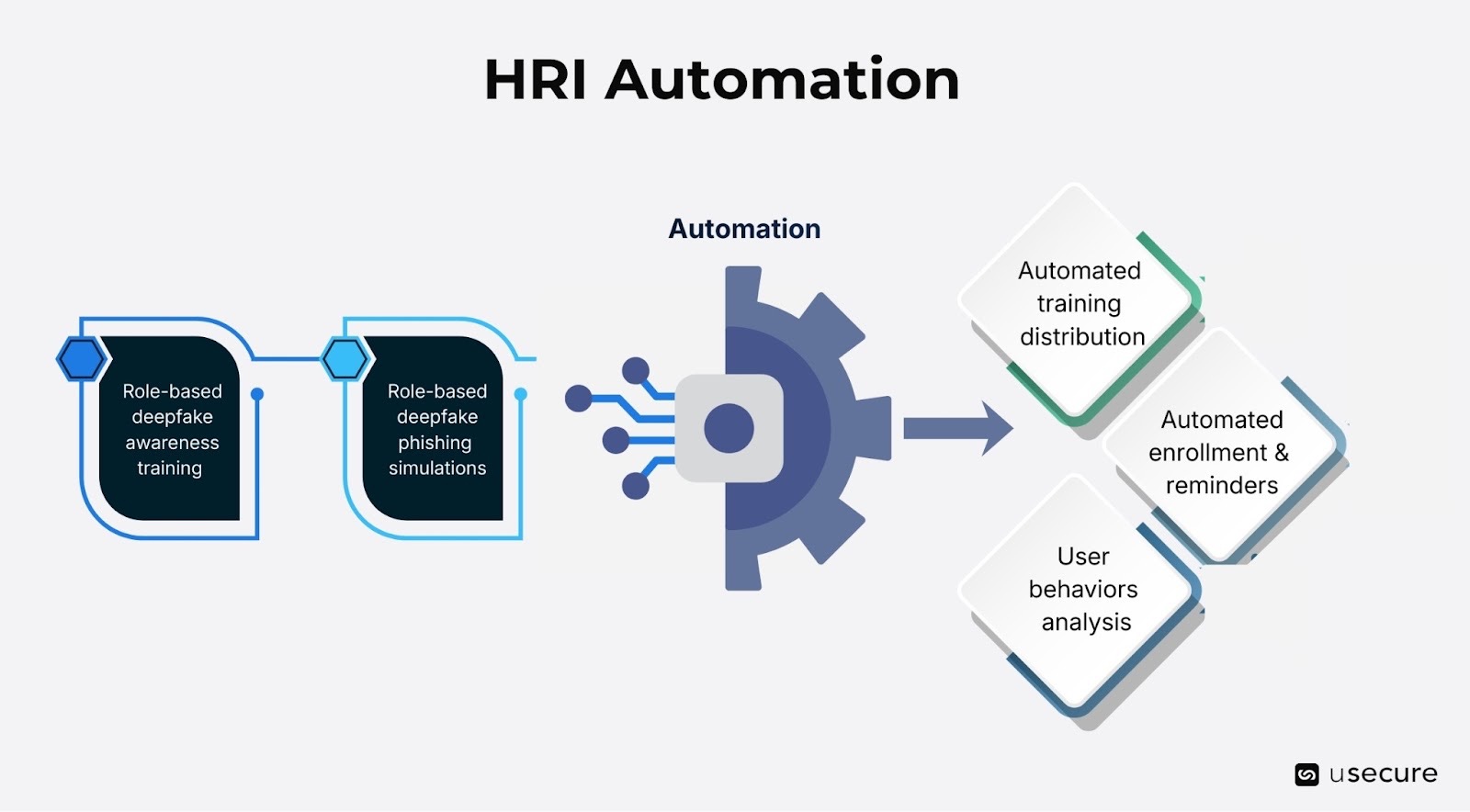

Role-based training is a critical component of an effective HRI strategy. Organizations should deliver regular deepfake awareness training, run simulated attacks (including voice and video cloning scenarios where appropriate), and continuously monitor behavioral risk.

The most effective platforms streamline this process through automation. Features such as personalized microlearning modules can address individual knowledge gaps with role-specific content, for example executive-focused deepfake scenarios or business email compromise (BEC) simulations for finance teams. Automated enrollment and reminders help maintain a consistent training cadence while minimizing administrative overhead.

Advanced HRI platforms also gather and analyze data on user behavior, such as awareness training and phishing simulation results, dark web exposures (such as credential leaks), and other risk factors, to generate a predictive Human Risk Score for each individual and for the organization overall. This provides real-time visibility into vulnerabilities, along with trend tracking that highlights persistent weak spots. By combining these signals, organizations can prioritize interventions and target high-risk users with personalized coaching and ongoing monitoring.
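As a rough illustration of how such signals might be combined, the sketch below computes a 0-100 score from three of the inputs mentioned above: phishing simulation results, training completion, and credential leaks. It is not usecure's scoring model or any vendor's; the weights, the cap on leaked credentials, and every name in the code are assumptions chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration only; a real platform would tune these.
WEIGHTS = {"phishing": 0.4, "training": 0.3, "exposure": 0.3}
MAX_LEAKS = 3  # assumed cap: three or more leaked credentials counts as full exposure


@dataclass
class UserSignals:
    name: str
    simulations_failed: int
    simulations_sent: int
    modules_completed: int
    modules_assigned: int
    leaked_credentials: int


def _ratio(part: int, whole: int) -> float:
    """Fraction clamped to at most 1; returns 0 when there is no data yet."""
    return min(part / whole, 1.0) if whole > 0 else 0.0


def risk_score(user: UserSignals) -> float:
    """Combine the three signals into a 0-100 score (higher means riskier)."""
    phishing = _ratio(user.simulations_failed, user.simulations_sent)
    # Training gap is the share of assigned modules not yet completed.
    if user.modules_assigned > 0:
        training = 1.0 - _ratio(user.modules_completed, user.modules_assigned)
    else:
        training = 0.0
    exposure = min(user.leaked_credentials, MAX_LEAKS) / MAX_LEAKS
    raw = (
        WEIGHTS["phishing"] * phishing
        + WEIGHTS["training"] * training
        + WEIGHTS["exposure"] * exposure
    )
    return round(100 * raw, 1)


def prioritize(users: list[UserSignals]) -> list[UserSignals]:
    """Order users from highest to lowest risk for targeted coaching."""
    return sorted(users, key=risk_score, reverse=True)


team = [
    UserSignals("engineer", 0, 4, 4, 4, 0),
    UserSignals("finance-lead", 3, 4, 1, 4, 2),
]
for user in prioritize(team):
    print(user.name, risk_score(user))
```

A weighted sum keeps the score easy to explain to the people being scored, which matters when it drives coaching decisions. A production system would likely add time decay and more signals.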

Navigating the Deepfake Frontier

Deepfakes represent an escalation in social engineering, where voice and video cloning deliver faster, more convincing wins for attackers. With statistics showing explosive growth and examples underscoring real dangers, the threat is clear. Yet, by embracing human risk intelligence, we can empower people to spot and resist these deceptions. In a world where seeing isn't believing, informed humans are our best defense. Stay vigilant, verify relentlessly, and remember: the next call might not be who it seems.

Explore the usecure demo hub to see usecure’s security awareness solution and the wider human risk suite in action.
