As artificial intelligence (AI) reshapes every facet of digital interaction, phishing attacks have evolved from clumsy scams into sophisticated, hyper-personalised threats. This blog explores how AI enables attackers to craft lures at unprecedented scale and highlights human risk intelligence (HRI) as a critical control for mitigating these risks. Drawing on data from 2025-2026, we'll dissect the transformation, tactics, defenses, and implications for organizations.

In this blog, we’ll cover:

- AI Has Changed Phishing Forever

- Inside AI-Powered Phishing: How Automation Is Redefining Social Engineering

- Understanding HRI and HRM

- How AI Amplifies Attacks

- The Psychology Behind AI-Powered Phishing

- Why Traditional Awareness Training Isn’t Enough Anymore

- AI vs AI: The Future of Phishing Defence

- 5 Ways to Protect Your Organization from AI-Powered Phishing

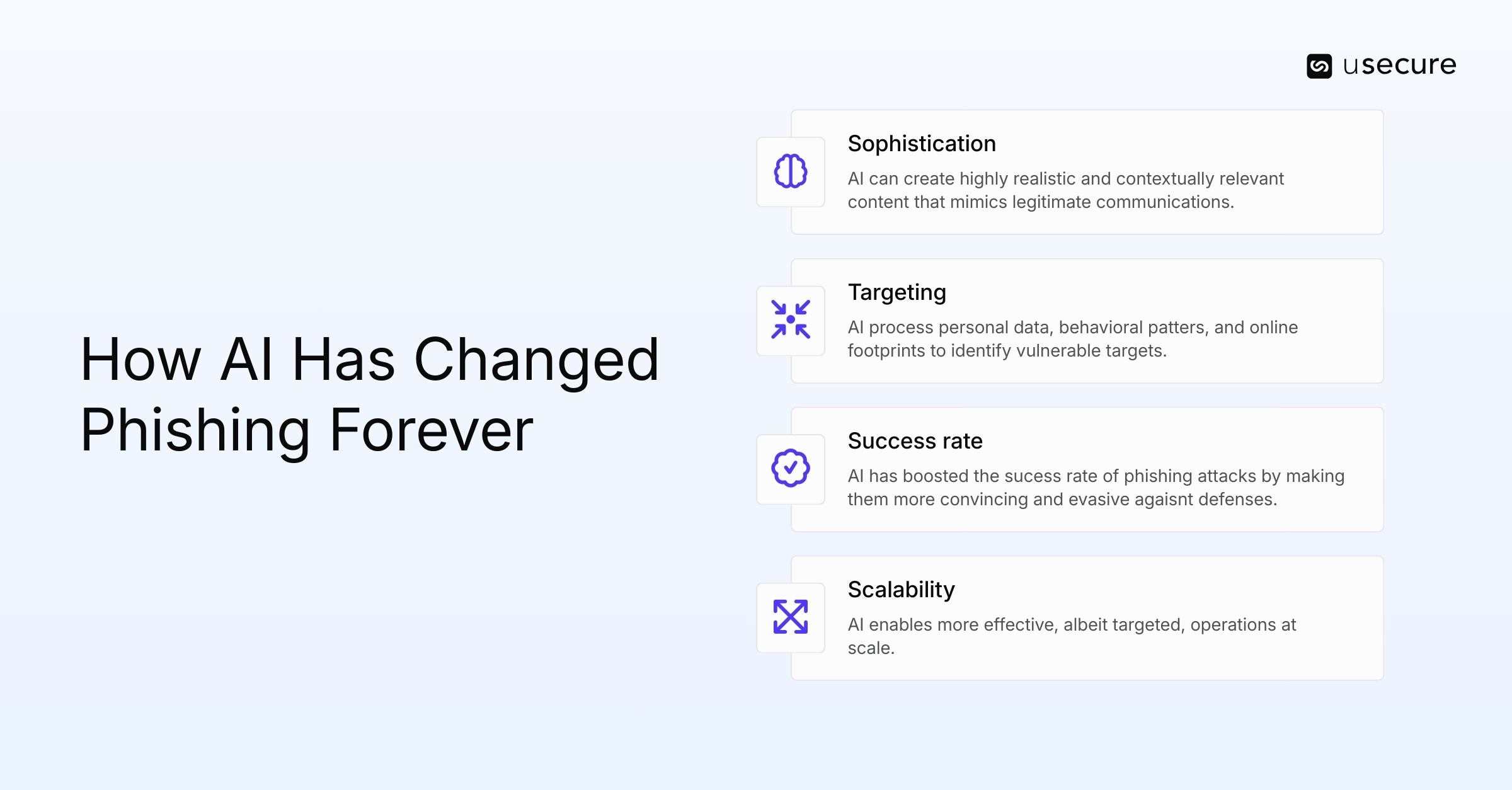

AI Has Changed Phishing Forever

Phishing emails used to be easy to spot. Bad grammar. Suspicious links. Generic greetings. AI has changed that. Traditional phishing relied on mass blasts with obvious red flags like broken English or mismatched branding, making them detectable by basic filters and vigilant users. In contrast, AI-powered phishing generates flawless, contextual messages that mimic legitimate communications seamlessly.

AI tools now write emails tailored to a recipient's role, company, and even recent news events, such as referencing a merger or internal project. This personalization happens at a massive scale, lowering costs for attackers while boosting success rates.

For instance, AI-driven phishing can produce campaigns 192 times faster than human efforts, with emails crafted in just five minutes versus 16 hours (16 hours is 960 minutes, and 960 / 5 = 192). The result? A 400% rise in successful phishing scams attributed to AI tools in 2025. This shift makes AI phishing a priority that demands proactive human risk intelligence (HRI) to identify vulnerable behaviors and human risk management (HRM) to enforce adaptive controls.

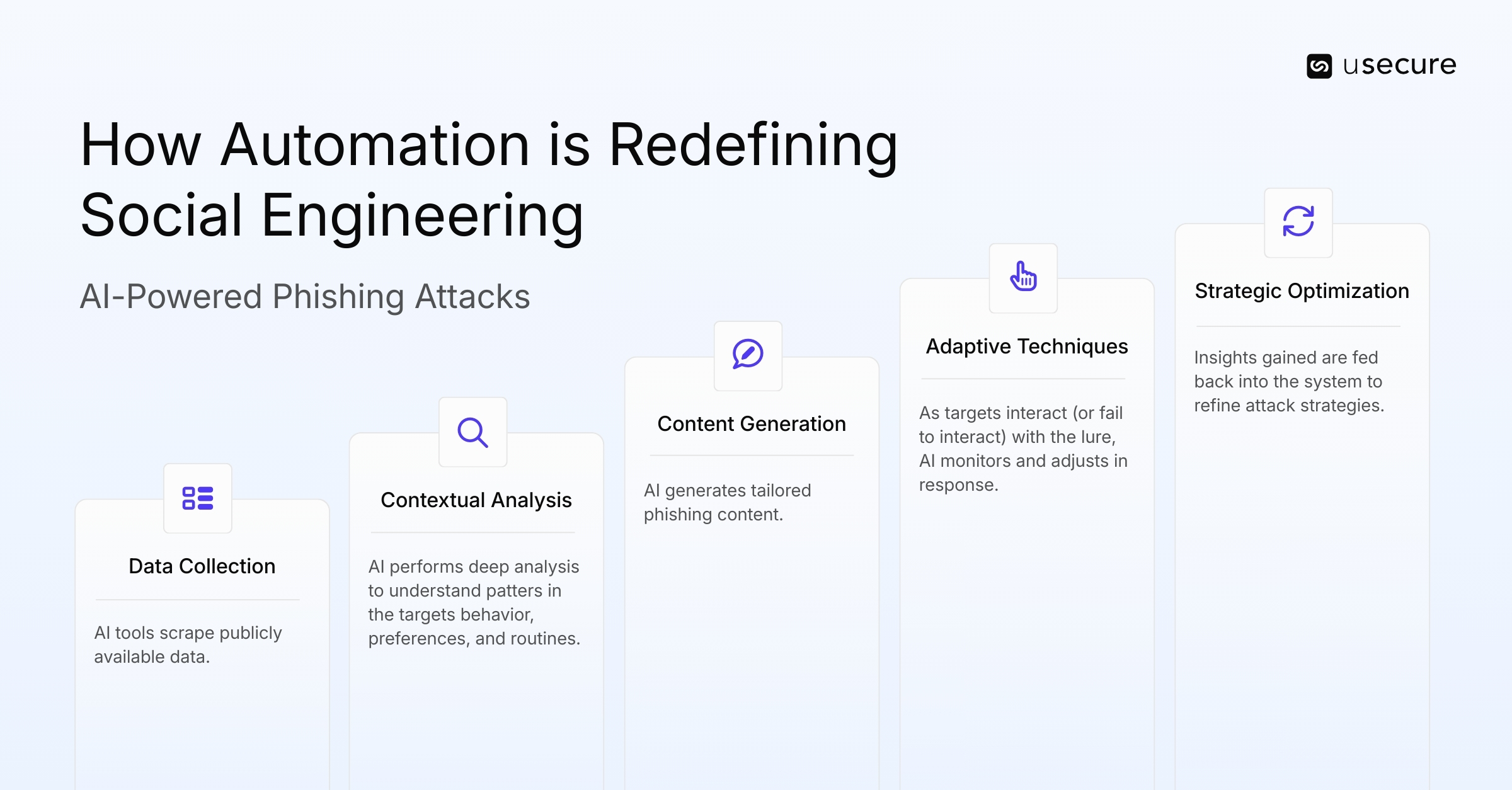

Inside AI-Powered Phishing: How Automation Is Redefining Social Engineering

Gone are the days of generic, error-ridden phishing emails. Today, large language models enable attackers to generate convincing, tailored messages using publicly available data. These AI tools remove the resource barriers that once limited spear phishing to select victims. Nowadays, attackers can produce hundreds of individualized lures in minutes, incorporating specific details like job titles, recent events, or even personal interests to increase credibility.

The process of AI-powered phishing and spear phishing often follows a structured, iterative cycle designed to continuously refine personalization and improve outcomes:

- Data Collection from Various Sources: This initial phase involves gathering vast amounts of personal and professional information about potential targets. AI tools scrape publicly available data from social media profiles, corporate websites, news articles, data breach dumps, and online forums.

- Contextual Analysis of User Behavior: Once data is collected, AI performs deep analysis to understand patterns in the target's behavior, preferences, and routines. Machine learning models might examine posting habits on social platforms, email response times, or interaction histories to infer psychological triggers.

- Dynamic Content Generation for Personalized Messages: AI generates tailored phishing content, such as emails, texts, or social media messages, in real-time. Large language models craft grammatically flawless, culturally attuned lures that incorporate specific details. This personalization can include forged attachments, links to malicious sites, or even deepfake elements.

- Adaptive Techniques Based on Responses: As targets interact (or fail to interact) with the lure, AI monitors and adjusts in response. For example, if a recipient opens but doesn't click a link, the system might send a follow-up with escalated urgency or alternative bait. Reinforcement learning algorithms analyze these interactions to refine delivery methods, timing, or even switch channels (e.g., from email to SMS), allowing the attack to evolve dynamically and evade detection by security filters.

- Performance Evaluation and Strategic Optimization: In this phase, aggregated results are analyzed through A/B testing, cohort analysis, attribution modeling, and KPI tracking. Insights gained are fed back into the system to refine segmentation models, improve predictive accuracy, and enhance future attack strategies.

Understanding HRI and HRM

AI is amplifying social engineering by automating reconnaissance and generating hyper-targeted attacks. To combat these threats, organizations are turning to Human Risk Intelligence (HRI) and Human Risk Management (HRM). HRM is a holistic cybersecurity approach that identifies, quantifies, manages, and reduces risks stemming from human error. It goes beyond traditional awareness training by measuring behavioral risks and intervening proactively.

HRI, a key component of HRM, involves gathering and analyzing data on risk factors, including behaviors, permissions, and psychographics, to create a predictive Human Risk Index for each user. This enables personalized interventions, such as just-in-time training or phishing simulations tailored to an individual's risk profile.
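To make the idea of a per-user Human Risk Index concrete, here is a minimal sketch in Python. It is not how any particular HRI product computes its score: the signal names, the weights, and the intervention thresholds are all assumptions chosen for illustration, and a real platform would calibrate them from observed data.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration only; not taken from any real product.
WEIGHTS = {"behaviour": 0.5, "permissions": 0.3, "exposure": 0.2}


@dataclass
class UserSignals:
    simulations_sent: int       # phishing simulations delivered to the user
    simulations_clicked: int    # simulations the user clicked on
    has_admin_rights: bool      # elevated permissions raise the impact of a compromise
    public_profile_fields: int  # details visible online (job title, projects, ...), capped at 10


def risk_index(user: UserSignals) -> float:
    """Combine the signals into a 0-100 score; higher means more risk."""
    if user.simulations_sent:
        click_rate = user.simulations_clicked / user.simulations_sent
    else:
        click_rate = 0.0
    permissions = 1.0 if user.has_admin_rights else 0.2
    exposure = min(user.public_profile_fields, 10) / 10
    score = (
        WEIGHTS["behaviour"] * click_rate
        + WEIGHTS["permissions"] * permissions
        + WEIGHTS["exposure"] * exposure
    )
    return round(100 * score, 1)


def intervention(score: float) -> str:
    """Map a score to a follow-up action (thresholds are assumptions)."""
    if score >= 60:
        return "targeted simulation and just-in-time training"
    if score >= 30:
        return "microlearning refresher"
    return "standard programme"


admin = UserSignals(simulations_sent=10, simulations_clicked=5,
                    has_admin_rights=True, public_profile_fields=8)
print(risk_index(admin), intervention(risk_index(admin)))
```

The point of the sketch is the shape of the calculation: behaviour, permissions, and exposure feed one comparable number, and that number decides which intervention a person receives.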

How AI Amplifies Attacks

Attackers now effectively have a 24/7 phishing assistant at their disposal. Using AI, they can generate highly convincing spear phishing emails enriched with personal details scraped from LinkedIn profiles and company websites. They can deploy deepfake voice scams that clone executives using just seconds of recorded audio. AI also enables the creation of realistic messages that impersonate recruiters or trusted contacts, all at scale, and with unprecedented precision.

Chatbot-powered scam conversations maintain real-time engagement, adapting responses to evade suspicion. Automated tools also enable multi-channel attacks, combining email with SMS or video calls. In 2025, vishing attacks surged 442% in the second half of the year, fueled by AI voice cloning achieving 85% accuracy. This supercharging effect has led to a 1,265% increase in AI-driven phishing attacks since 2023, making traditional defenses obsolete.

- HRI angle: HRI strategies must integrate AI monitoring to detect anomalous interactions and flag high-risk scenarios.

The Psychology Behind AI-Powered Phishing

Hyper-personalisation increases trust by exploiting psychological biases like authority, urgency, and familiarity. AI crafts messages mimicking a boss's tone or referencing shared events, triggering fear-of-missing-out or compliance reflexes. Contextual manipulation, such as urgent invoice requests during financial quarters, amplifies these effects.

AI makes manipulation precise, with success rates 24% higher than elite human-crafted attacks by March 2025. This evergreen vulnerability stems from human tendencies: 95% of breaches involve human error, including falling for social engineering.

- HRI angle: HRI should be used to profile individual biases and educate people on them, fostering a culture of skepticism that turns psychology from a weakness into a defense.

Why Traditional Awareness Training Isn’t Enough Anymore

Static training can’t keep up with the rapid evolution of AI phishing templates. Employees once trained to spot grammatical errors now face realistic simulations that exploit behavioral nuances. Phishing is evolving faster than annual refreshers, with AI enabling polymorphic attacks that change content dynamically.

In 2025, organizations reported a 17.3% increase in phishing emails, with 82.6% leveraging AI-generated content that evades secure email gateways. Traditional programs fail because they don't adapt continuously or focus on behavior-based learning. Adaptive training, tied to HRI insights, is essential for simulating hyper-personalised lures and measuring real-time responses. Without it, success rates for AI phishing reach 54%, compared to 12% for non-AI attacks.

- HRI angle: HRI should shift from compliance checkboxes to ongoing, data-driven risk reduction.

AI vs AI: The Future of Phishing Defence

The only way to fight AI-powered phishing is with smarter AI-powered defence. As attackers use generative AI for hyper-personalised lures, defenders leverage AI for behavioral analysis, predictive risk scoring, and real-time threat intelligence. AI-driven detection systems can identify anomalies like unusual login patterns or contextual mismatches that humans might miss.
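One simple form of anomaly detection for unusual login patterns is a baseline check on when a user normally signs in. The sketch below is illustrative only: the function name, the minimum history length, and the z-score threshold of 3.0 are assumptions, and production systems typically combine many more signals (device, location, behaviour) in learned models.

```python
from statistics import mean, stdev


def is_unusual_login_hour(history_hours: list[int], new_hour: int,
                          threshold: float = 3.0) -> bool:
    """Flag a login whose hour deviates strongly from the user's own baseline.

    Simplification: hours are treated as plain numbers, so the wrap-around
    between 23:00 and 00:00 is ignored.
    """
    if len(history_hours) < 5:
        return False  # too little history to judge
    baseline = mean(history_hours)
    spread = stdev(history_hours)
    if spread == 0:
        return new_hour != baseline
    z_score = abs(new_hour - baseline) / spread
    return z_score > threshold


usual = [9, 9, 10, 8, 9, 10, 9, 8]       # typical office-hours logins
print(is_unusual_login_hour(usual, 3))   # a 3 a.m. login
print(is_unusual_login_hour(usual, 10))  # a 10 a.m. login
```

A flag like this would not block anything on its own; it is the kind of signal that feeds a wider risk score or triggers extra verification.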

In 2025, organizations using AI extensively in security shortened breach response times by 80 days and saved $1.9 million per incident. However, the battle intensifies: 16% of breaches involved AI-generated phishing or deepfakes.

- HRI angle: Future defenses will pit AI against AI, with HRI providing the human layer by scoring employee risks and triggering automated interventions. This dual approach ensures scalability against attacks that have doubled in volume year-over-year due to AI.

5 Ways to Protect Your Organization from AI-Powered Phishing

AI has transformed phishing from clumsy, easy-to-spot scams into highly convincing, personalised attacks. To stay protected, organizations need to evolve their defence strategies just as quickly. Here are five practical ways to protect your organization from AI-powered phishing.

- Move Beyond Traditional Security Awareness Training

Annual refreshers aren't cutting it anymore. Shift to continuous, behaviour-focused training that prepares people for the real thing:

- Teach psychological red flags: urgency, authority pressure, emotional triggers, and subtle manipulation tactics AI exploits so well.

- Run regular simulations featuring AI-generated phishing (hyper-personalised, no grammar errors, contextual details pulled from public sources).

- Deliver bite-sized microlearning with instant feedback so lessons stick.

- Encourage employees to question context and intent, not just suspicious formatting or links.

- Use AI As Your Defense

If attackers are wielding AI, your defences should too. Modern email security platforms go far beyond keyword filters.

- Analyse behavioural patterns, sender reputation, and anomalies in communication style.

- Detect sophisticated impersonation (CEO fraud, trusted vendor spoofing) even when grammar is perfect.

- Flag unusual sending behaviours or signs of deepfake-style manipulation in attachments/content.

- Block threats before they reach inboxes using real-time AI models trained on evolving attack patterns.
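As a small illustration of impersonation detection that does not rely on spelling or grammar, the sketch below checks whether an email's display name matches a protected person while the sending domain does not. The `PROTECTED` directory, the names, and the domains are hypothetical, and this rule is only one of many signals a real email security platform would weigh.

```python
from email.utils import parseaddr

# Hypothetical directory: protected display name -> legitimate sending domain.
PROTECTED = {"jane doe": "example.com"}


def looks_like_impersonation(from_header: str) -> bool:
    """Return True when a protected name is paired with an unexpected domain."""
    display_name, address = parseaddr(from_header)
    domain = address.rpartition("@")[2].lower()
    expected_domain = PROTECTED.get(display_name.strip().lower())
    return expected_domain is not None and domain != expected_domain


print(looks_like_impersonation("Jane Doe <jane.doe@examp1e.com>"))  # lookalike domain
print(looks_like_impersonation("Jane Doe <jane.doe@example.com>"))  # legitimate domain
```

A perfectly written message from the lookalike domain would still be flagged, which is the property that matters once AI has removed the grammar mistakes.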

- Enforce Multi-Factor Authentication (MFA) Everywhere

Credential theft remains a top entry point, but strong MFA can stop attackers even when a phishing attempt succeeds in capturing a password. Prioritise:

- Mandatory MFA across all critical systems and apps (not optional for high-risk roles).

- Phishing-resistant methods like hardware security keys (FIDO2) or passkeys. Authenticator apps are a stronger fallback than easily phishable SMS codes, but their one-time codes can still be captured by a real-time phishing page.

- Risk-based policies that prompt extra verification for unusual logins or actions.
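A risk-based policy of the kind described in the last point can be sketched as a small decision function. The signals, the scoring, and the responses below are assumptions for illustration; real identity platforms expose their own policy engines and use far richer context.

```python
from dataclasses import dataclass


@dataclass
class LoginContext:
    known_device: bool      # device has been seen for this user before
    usual_country: bool     # login comes from a country the user normally works in
    sensitive_action: bool  # e.g. changing payment details or resetting a password


def required_verification(ctx: LoginContext) -> str:
    """Count risk signals and escalate the verification step accordingly."""
    risk = 0
    if not ctx.known_device:
        risk += 1
    if not ctx.usual_country:
        risk += 1
    if ctx.sensitive_action:
        risk += 1

    if risk == 0:
        return "standard MFA"
    if risk == 1:
        return "step-up: security key or passkey"
    return "block and alert the security team"


print(required_verification(LoginContext(True, True, False)))
print(required_verification(LoginContext(False, True, False)))
print(required_verification(LoginContext(False, False, True)))
```

The design choice here is that friction scales with risk: routine logins stay quick, while unusual combinations of signals trigger stronger checks.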

- Strengthen Internal Verification Processes

AI makes impersonation terrifyingly convincing, especially in voice deepfakes or urgent executive requests. Build human safeguards:

- Require out-of-band verification (phone call, separate channel) for financial transfers, password resets, or sensitive data requests.

- Enforce clear, multi-step approval workflows for payments and high-value actions.

- Foster a “pause and verify” culture: train teams that it's okay (and expected) to double-check unusual requests, even from the boss.
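The safeguards above can be expressed as a simple rule: a payment is released only after out-of-band verification and enough independent approvals. The sketch below assumes a hypothetical `PaymentRequest` record and a dual-approval threshold of 10,000; both are illustrative, not a recommendation for any specific finance system.

```python
from dataclasses import dataclass, field


@dataclass
class PaymentRequest:
    requester: str
    amount: float
    verified_out_of_band: bool = False  # confirmed via a separate channel, e.g. a call to a known number
    approvers: set[str] = field(default_factory=set)


def approve(request: PaymentRequest, approver: str) -> None:
    """Record an approval; requesters may not approve their own payment."""
    if approver == request.requester:
        raise ValueError("requester cannot approve their own payment")
    request.approvers.add(approver)


def can_release(request: PaymentRequest, dual_approval_threshold: float = 10_000) -> bool:
    """Require out-of-band verification plus one or two approvers, depending on amount."""
    approvals_needed = 2 if request.amount >= dual_approval_threshold else 1
    return request.verified_out_of_band and len(request.approvers) >= approvals_needed


request = PaymentRequest(requester="ceo@example.com", amount=25_000)
approve(request, "finance.lead@example.com")
print(can_release(request))  # still blocked: not verified, only one approver
```

Even a flawless voice deepfake of an executive cannot satisfy this rule by itself, because the checks do not depend on how convincing the request sounds.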

- Build a Reporting-First Culture

Speed matters: the quicker a phishing attempt is flagged, the faster it can be contained and neutralised across the organization. Make reporting effortless and rewarding:

- Add prominent one-click “Report Phish” buttons in email clients and tools.

- Provide rapid, constructive feedback from security teams so reporters feel heard.

- Recognise and celebrate proactive reporting (e.g., shout-outs, small incentives) to normalise the behaviour.

AI Is Making Phishing Attacks Smarter

AI has helped phishing evolve and empowered attackers to exploit human errors at scale. The organizations that stay ahead aren't just reacting to threats; they're proactively reducing human risk at every layer. By prioritising Human Risk Intelligence (HRI), you gain real-time visibility into vulnerabilities, predictive scoring to focus efforts where they matter most, and data-driven insights that turn employees from the most exploited vector into your strongest, most adaptive line of defense.

Explore the usecure demo hub to see usecure’s security awareness solution and the wider human risk suite in action.
