It is a quiet Tuesday afternoon when the phone rings at the finance desk of a mid-sized engineering firm. The caller identifies himself as the company’s long-time vendor manager and provides all the correct account details, purchase order numbers, and references from previous transactions. He explains that due to a recent system upgrade, the company needs to update the payment details for an upcoming large invoice. The request sounds routine, the documentation appears legitimate, and the details check out in the system. The finance team processes the change without hesitation. Within days, multiple high-value payments totaling more than $25 million are redirected to accounts controlled by the fraudsters. Only later does the company discover that the “vendor” never existed. It was a sophisticated impersonation built on a fully fabricated synthetic identity.

This is not a hypothetical scenario pulled from a security conference slide deck. Similar incidents have played out in real organizations with alarming frequency, and the tactics are becoming increasingly refined. Synthetic identity fraud blended with high-quality impersonation attacks has become one of the most costly and insidious threats facing support desks and finance operations teams today.

In this post, we will explore the mechanics of these threats, examine recent real-life examples, review the latest statistics, and explain how implementing Human Risk Intelligence (HRI) can strengthen your cyber defense.

What Exactly Is a Synthetic Identity?

Synthetic identity fraud involves blending stolen, fabricated, or manipulated data to create a seemingly legitimate persona. For example, fraudsters might pair a real Social Security number with a fake name, address, and employment history. They then nurture this identity over months by building credit profiles or engaging in low-level transactions. Once the synthetic identity appears credible, it is used to secure loans, open accounts, or process large transfers before disappearing without a trace.

This method proves especially dangerous because there is often no immediate victim to report the crime. The identity exists only on paper and in databases until it is exploited. Generative artificial intelligence has supercharged this process: tools now allow rapid creation of realistic documents, photos, and even video appearances that pass initial checks.

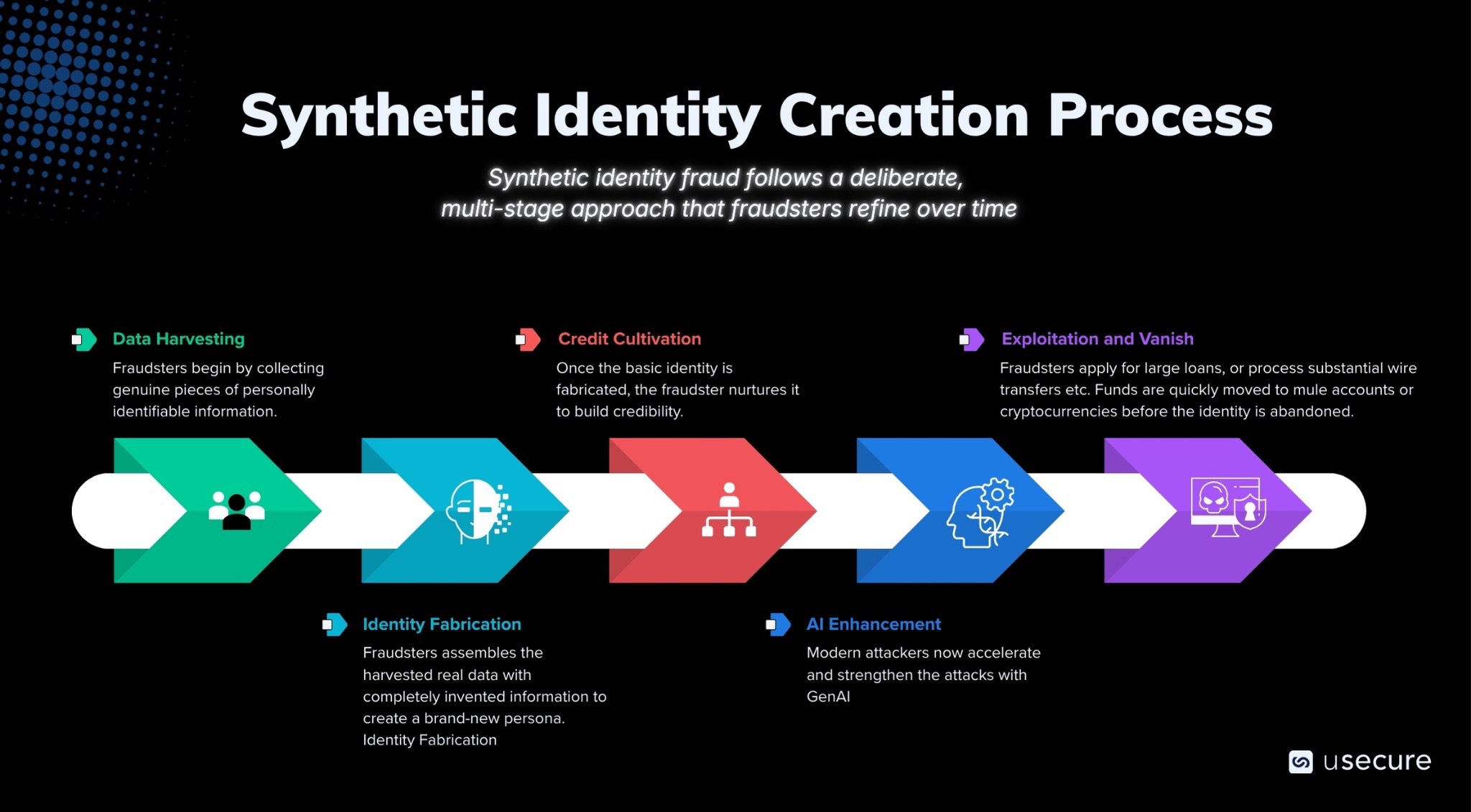

The Synthetic Identity Creation Process

Synthetic identity fraud follows a deliberate, multi-stage approach that fraudsters refine over time. Here is a clear, step-by-step breakdown of how it works.

- Step 1 Data Harvesting: Fraudsters begin by collecting genuine pieces of personally identifiable information. They source Social Security numbers, partial names, addresses, dates of birth, and other fragments from large-scale data breaches, dark web marketplaces, or even public records. This step is crucial because using real elements makes the eventual synthetic identity harder for basic checks to flag. Harvesting often happens quietly over weeks or months through automated scraping tools or purchased datasets. At this stage, the attacker has raw building blocks but nothing usable yet.

- Step 2 Identity Fabrication: Next, the fraudster assembles the harvested real data with completely invented information to create a brand-new persona. For example, they might pair a legitimate Social Security number belonging to a child or deceased individual with a fake name, address, phone number, and employment history. The goal is to construct a profile that has no real-world counterpart yet passes initial database lookups. This fabricated identity exists only in digital systems at first. Attackers use spreadsheets or custom scripts to test combinations until they find one that avoids immediate red flags. This blending of real and fake is what distinguishes synthetic identities from stolen ones.

- Step 3 Credit Cultivation: Once the basic identity is fabricated, the fraudster nurtures it to build credibility. They apply for credit cards, open bank accounts, or make small purchases and payments over an extended period, often six to eighteen months. This phase, sometimes called credit piggybacking or seasoning, creates a positive financial history that makes the identity look trustworthy. Small, consistent activity tricks credit bureaus and financial institutions into viewing the profile as legitimate. The fraudster may even file fake tax returns or forge utility bills to deepen the footprint. This patient cultivation is why synthetic fraud is so difficult to detect early: it mimics normal consumer behavior.

- Step 4 AI Enhancement: Modern attackers now accelerate and strengthen this process with generative artificial intelligence. Tools create realistic supporting documents, such as fake driver's licenses, passports, or bank statements, complete with watermarks and security features that fool visual inspections. AI can also generate deepfake photos, voice clones, or even short video clips of the synthetic person for video verification attempts. This step dramatically reduces the time and skill required, allowing fraud rings to scale operations rapidly. The AI layer makes the identity nearly indistinguishable from a real customer or vendor during support desk interactions or finance approvals.

- Step 5 Exploitation and Vanish: With a fully matured, AI-enhanced synthetic identity, the fraudster strikes. They apply for large loans, request high-limit credit increases, open business accounts, or process substantial wire transfers. Funds are quickly moved to mule accounts or cryptocurrencies before the identity is abandoned. Because the persona never existed in reality, there is often no actual victim to file a police report, which delays discovery. Losses can reach tens or hundreds of thousands of dollars per identity. The cycle then restarts with new harvested data.
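The stages above suggest a couple of signals a reviewer could check before approving a large request. The following is a minimal sketch, not a production detector: the `Application` fields, the `names_seen_for_ssn` lookup, and both thresholds (`large_request`, `seasoning_months`) are hypothetical and chosen only to mirror Steps 2, 3, and 5.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Application:
    # Hypothetical fields, for illustration only.
    name: str
    ssn: str
    first_credit_activity: date
    requested_amount: float


def synthetic_identity_flags(app, names_seen_for_ssn, today,
                             large_request=50_000.0, seasoning_months=18):
    """Return a list of warning flags for one application.

    names_seen_for_ssn maps an SSN to the set of names previously
    recorded with it (an assumed internal lookup, not a real API).
    """
    flags = []

    # Step 2: a real SSN paired with an invented name tends to show up
    # as one SSN associated with more than one name.
    other_names = names_seen_for_ssn.get(app.ssn, set()) - {app.name}
    if other_names:
        flags.append("ssn_linked_to_other_names")

    # Steps 3 and 5: a large request arriving at the end of a short
    # "seasoning" period (the text cites six to eighteen months).
    history_months = (today - app.first_credit_activity).days / 30.44
    if history_months <= seasoning_months and app.requested_amount >= large_request:
        flags.append("large_request_on_young_history")

    return flags
```

A flag here is a prompt for manual review rather than proof of fraud; legitimate customers can trip either check.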

The Rising Threat of Impersonation in Daily Operations

Fraudsters increasingly pose as executives, vendors, or customers to manipulate support desk agents and finance personnel. A single convincing call, email, or message requesting a password reset or direct deposit update can succeed when verification procedures fall short.

- These impersonation tactics frequently target routine financial processes. In U.S. universities, for instance, attackers have used spoofed communications to alter employee payroll direct deposits, forcing institutions to implement stricter manual verification protocols to prevent losses totaling hundreds of thousands of dollars.

- Finance operations face comparable risks when approving invoices or updating vendor payment details. By exploiting trust in internal communications, attackers can redirect legitimate funds to accounts they control.

- The threat becomes even more sophisticated when combined with deepfake technology. In one high-profile case, an employee at a global engineering firm joined a video conference that appeared to include senior executives, including the chief financial officer. In reality, every other participant was a deepfake. The employee authorized transfers totaling $25 million before the deception was discovered.

- Beyond impersonation alone, synthetic identity fraud amplifies the danger. Canadian authorities, for example, dismantled a major ring that had created over 680 fabricated identities since 2016, leading to approximately $4 million in confirmed damages across banking institutions.
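Many of these attacks succeed because a payment-detail change is approved on the strength of the request itself. One way to express a stricter verification protocol is as an explicit gate, sketched below. This is an illustration under stated assumptions: the `PaymentChangeRequest` fields, the `numbers_on_file` lookup, and the two-approver rule are hypothetical and not drawn from any specific institution's policy.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class PaymentChangeRequest:
    # Hypothetical fields, for illustration only.
    vendor_id: str
    new_account: str
    callback_number_used: Optional[str]  # number staff dialed to confirm
    approver_ids: Tuple[str, ...]


def can_apply_change(request, numbers_on_file, min_approvers=2):
    """Return (allowed, reasons) for a vendor payment-detail change.

    numbers_on_file maps vendor_id to phone numbers recorded before
    the request arrived; numbers supplied in the request do not count.
    """
    reasons = []
    known_numbers = numbers_on_file.get(request.vendor_id, set())

    # Out-of-band check: confirm via a contact already on file.
    if request.callback_number_used is None:
        reasons.append("no callback performed")
    elif request.callback_number_used not in known_numbers:
        reasons.append("callback number not on file")

    # Assumed rule: at least two distinct people must sign off.
    if len(set(request.approver_ids)) < min_approvers:
        reasons.append("needs second approver")

    return (not reasons, reasons)
```

The point of the design is that a caller who supplies correct account details and a plausible story still cannot pass the gate, because neither input is something the caller controls.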

Statistics That Reveal the True Scale of the Crisis

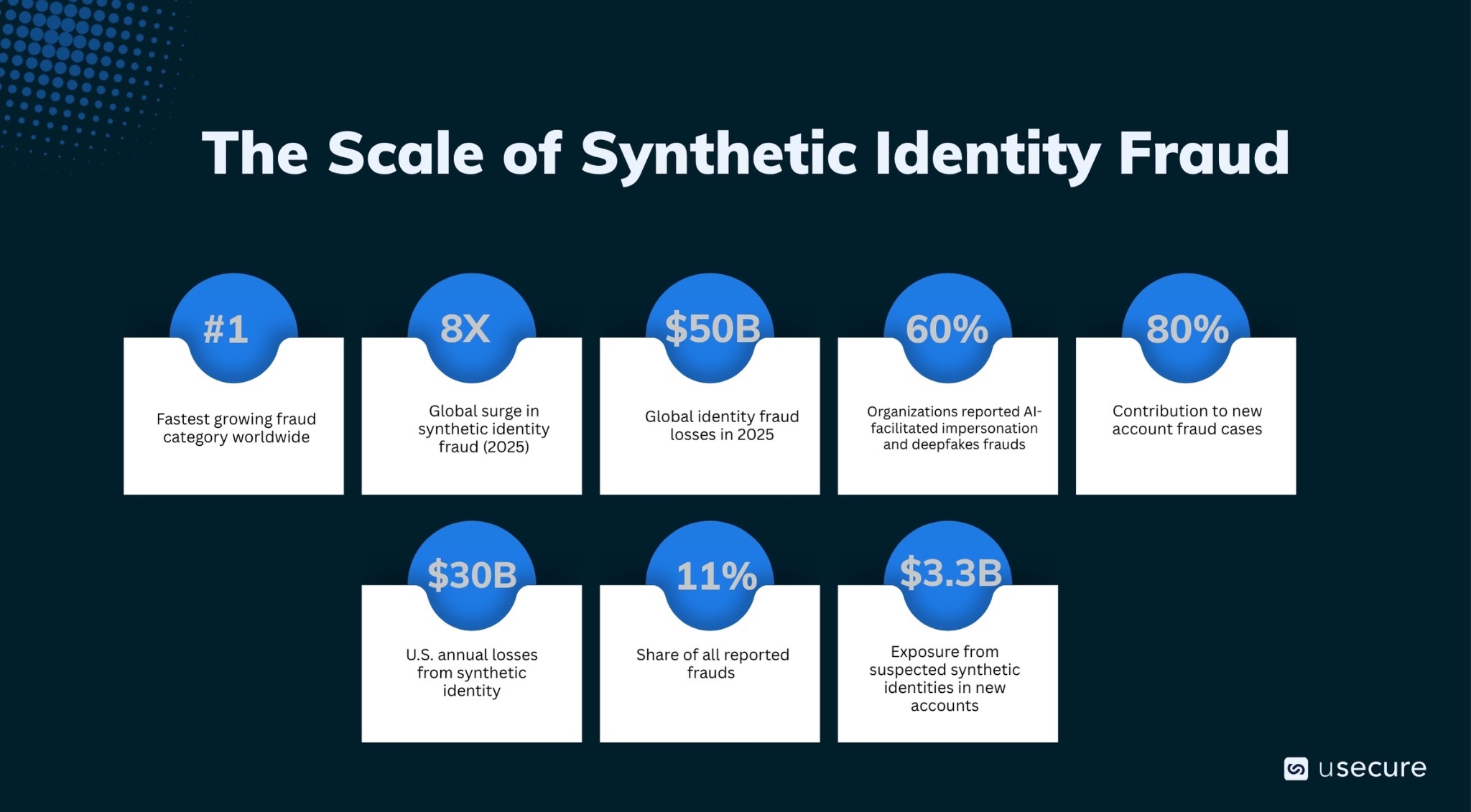

Recent statistics demonstrate how synthetic identity fraud and impersonation attacks have shifted from occasional threats into pervasive risks that routinely target support desks and finance operations.

- According to the latest LexisNexis Cybercrime Report analyzing over 116 billion transactions in 2025, synthetic identity fraud surged eight-fold globally and now represents 11% of all reported fraud. It ranks as the fastest-growing fraud category worldwide.

- In the United States, the financial impact is particularly severe. Annual losses from synthetic identity schemes are estimated between $30 billion and $35 billion, while U.S. lenders already face over $3.3 billion in exposure from suspected synthetic identities in new accounts alone.

- These identities are now deeply embedded in the fraud landscape. They contribute to up to 80% of new account fraud cases in some assessments and represent 35% of all identity-related fraud losses. On average, schemes now span more than 90 days to evade detection, making them significantly harder to spot early.

- Account creation fraud remains especially high risk. Recent assessments show that roughly 8.3% of new digital account applications exhibit suspicious activity. Human factors play a central role here: Verizon’s research reveals that two-thirds of breaches involve human error or social engineering. Together, these findings underscore the urgent need for support desks and finance teams to adopt layered defenses that address both technological and behavioral risks.

- The situation continues to worsen. Nearly 60% of organizations reported increased fraud losses in 2025, with AI-facilitated impersonation and deepfakes cited as key drivers. Projections indicate AI-powered fraud losses in the U.S. could reach $40 billion by 2027.

- Globally, losses from broader identity fraud exceeded $50 billion in 2025, with early 2026 indicators pointing to further escalation. Businesses alone lose an estimated $20 to $40 billion annually to synthetic identity fraud.

Integrating Human Risk Intelligence (HRI) into Your Cyber Defense

HRI shifts the focus from reactive training to continuous, data-driven insight that turns human behavior into a measurable layer of defense. Advanced platforms collect signals from security awareness activities, simulated phishing campaigns, dark web credential exposures, policy compliance tracking, and real-time user interactions. These inputs combine to generate predictive cybersecurity health scores and risk status for individual employees, teams, and entire departments. The scores highlight exactly who is most vulnerable to impersonation tactics or likely to approve suspicious requests without sufficient scrutiny.

By layering these insights directly into daily workflows, organizations move beyond guesswork. They gain the ability to spot subtle anomalies in request patterns that static systems overlook while proving measurable progress to leadership and auditors.
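To make the idea of a health score concrete, here is a minimal sketch of how such signals could be combined into a single number and a risk status. It is not how any particular HRI platform computes its scores: the `EmployeeSignals` fields, the weights, the cap on exposures, and the status thresholds are all assumptions picked for illustration.

```python
from dataclasses import dataclass


@dataclass
class EmployeeSignals:
    # Hypothetical, normalized inputs, for illustration only.
    training_completion: float  # 0.0 to 1.0, share of training completed
    phishing_click_rate: float  # 0.0 to 1.0, share of simulations clicked
    exposed_credentials: int    # count of dark web credential exposures
    policy_compliance: float    # 0.0 to 1.0, share of policies acknowledged


def risk_score(signals):
    """Weighted 0-100 risk score; higher means more at risk."""
    # Cap exposures at 5 so one noisy input cannot dominate (assumption).
    exposure = min(signals.exposed_credentials, 5) / 5
    score = (
        0.25 * (1 - signals.training_completion)
        + 0.35 * signals.phishing_click_rate
        + 0.20 * exposure
        + 0.20 * (1 - signals.policy_compliance)
    )
    return round(100 * score, 1)


def risk_status(score):
    """Map a score to a coarse status using assumed thresholds."""
    if score >= 60:
        return "high"
    if score >= 30:
        return "medium"
    return "low"
```

A team-level view could then be as simple as averaging `risk_score` across members, which is enough to show which departments handling payment changes warrant extra verification steps.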

The Path Forward for Resilient Operations

The convergence of synthetic identity fraud and impersonation attacks demands immediate and sustained action from organizations of all sizes. Embedding HRI into daily operations is one way to build genuine resilience against these evolving threats. The time to strengthen your frontline defenses is now.

Ready to turn human risk into human resilience? Explore the demo hub to see our security awareness solution and the wider human risk suite in action.
