Generative AI tools like ChatGPT, Claude, and Copilot have become everyday workplace assistants. But for many organizations, this convenience comes with a hidden cost: sensitive company data quietly leaking into public AI models through simple copy-paste actions. Driven by human behavior and gaps in governance, the use of AI is creating a cyber exposure that traditional security tools can’t see.

In this post, we take an in-depth look at GenAI data leakage: what it actually is, the types of sensitive data most commonly exposed, the consequences organizations face, real-world incidents, and the human behaviors driving the risk. Most importantly, we look at how Human Risk Intelligence (HRI) provides a modern, proactive defense that helps organizations harness the power of AI without compromising security.

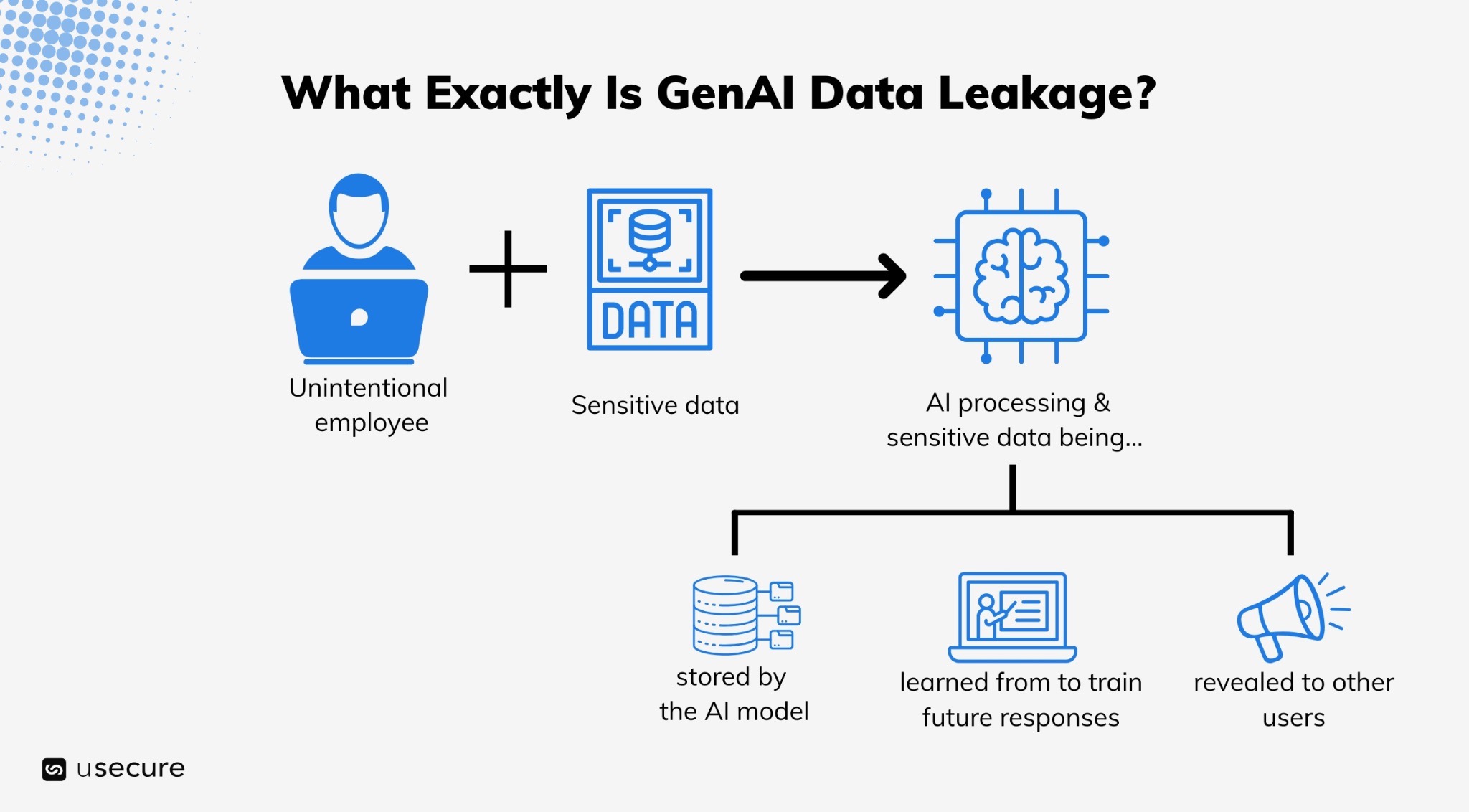

What Exactly Is GenAI Data Leakage?

GenAI data leakage refers to sensitive or confidential information being unintentionally exposed through generative AI systems during input, processing, or output.

It happens when data that employees never intended to share ends up being stored, learned from, or revealed by an AI model. Once submitted, the data doesn't vanish. Public tools may retain it unless retention is explicitly disabled, and many free-tier accounts feed directly into training datasets. Even "private" enterprise versions carry risks if misconfigured.

Unlike traditional breaches involving sophisticated hackers, this leakage is usually unintentional. Employees aren't malicious; they're just trying to work faster.

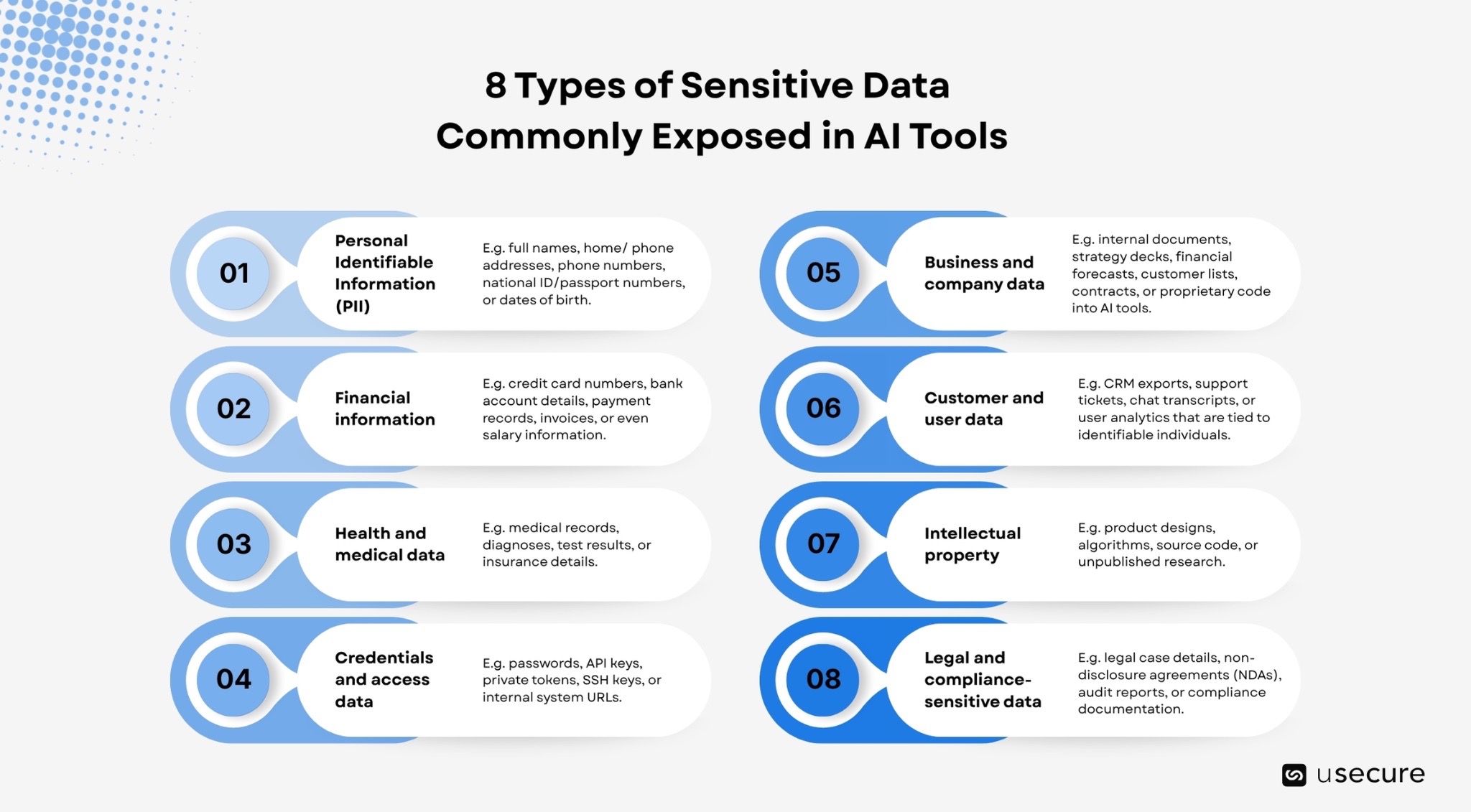

8 Types of Sensitive Data Commonly Exposed in AI Tools

Employees often underestimate just how much sensitive data they paste into AI tools. It’s not just passwords; several categories are commonly exposed:

- Personally Identifiable Information (PII): This covers details such as full names, home addresses, phone numbers, email addresses, national ID or passport numbers, and dates of birth.

- Financial information: Employees sometimes paste credit card numbers, bank account details, payment records, invoices, or even salary information into AI tools for processing or analysis. This type of data is particularly valuable to attackers and can lead to serious consequences if exposed.

- Health and medical data: This can include medical records, diagnoses, test results, or insurance details. Such data is heavily regulated and requires strict handling under laws like GDPR or HIPAA.

- Credentials and access data: This includes passwords, API keys, private tokens, SSH keys, and internal system URLs that may contain login information.

- Business and company data: This includes internal documents, strategy decks, financial forecasts, customer lists, contracts, and proprietary code.

- Customer and user data: This includes CRM exports, support tickets, chat transcripts, and user analytics that are tied to identifiable individuals. Sharing this data can violate privacy regulations and company policies.

- Intellectual property: This includes product designs, algorithms, source code, and unpublished research. Even if it doesn’t seem “sensitive” in the traditional sense, exposing intellectual property can harm a company’s competitive advantage.

- Legal and compliance-sensitive data: This includes legal case details, non-disclosure agreements (NDAs), audit reports, and compliance documentation.

This kind of data usually gets pasted into AI tools because people want help working faster, and they often forget that the data itself is sensitive. A simple rule of thumb helps: if you wouldn’t feel comfortable posting the information publicly on the internet, it shouldn’t be entered into an unapproved AI tool.
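
As a rough illustration of the categories above, the sketch below scans text for a few recognizable patterns before it is pasted into an AI tool. The function name `find_sensitive_data`, the category labels, and the specific patterns are assumptions chosen for this example. Real data loss prevention tooling covers far more formats, and pattern matching will still miss context-dependent data such as strategy decks or contracts.

```python
import re

# Illustrative patterns only; each is a simplification, not an exhaustive detector.
PATTERNS = {
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}

# Candidate card numbers: 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum used by payment cards."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:  # double every second digit, counting from the right
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def find_sensitive_data(text: str) -> dict[str, list[str]]:
    """Map each detected category to the matching snippets found in the text."""
    findings: dict[str, list[str]] = {}
    for category, pattern in PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            findings[category] = matches
    # Keep only digit runs that pass the checksum, to reduce false positives.
    cards = [
        candidate
        for candidate in CARD_CANDIDATE.findall(text)
        if luhn_valid(re.sub(r"[ -]", "", candidate))
    ]
    if cards:
        findings["payment_card_number"] = cards
    return findings
```

A non-empty result is a signal to stop and reconsider before pasting. An empty result does not mean the text is safe to share; the rule of thumb above still applies.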

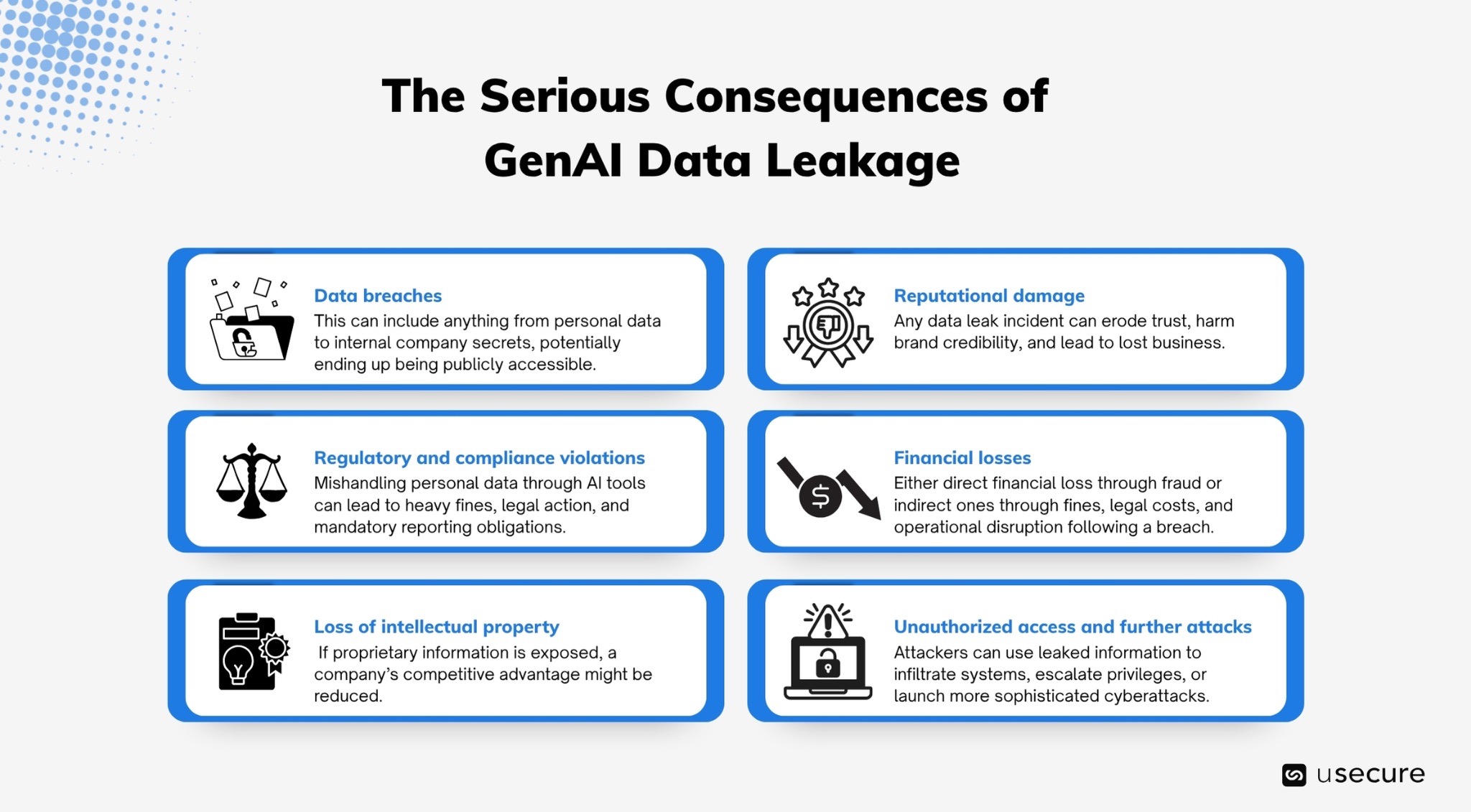

The Serious Consequences of GenAI Data Leakage

GenAI data leakage can lead to a wide range of serious and far-reaching consequences for individuals and organizations.

- Data breaches

One of the most immediate risks is data breaches, where sensitive information becomes exposed to unauthorized parties. This can include anything from personal data to internal company secrets, potentially ending up in the hands of attackers or being publicly accessible.

- Regulatory and compliance violations

It can also result in regulatory and compliance violations. Laws such as GDPR require strict protection of personal data, and mishandling it through AI tools can lead to heavy fines, legal action, and mandatory reporting obligations.

- Loss of intellectual property

Another major impact is the loss of intellectual property. If proprietary information like product designs, code, or business strategies is exposed, competitors could gain access to valuable insights, reducing a company’s competitive advantage.

- Reputational damage

GenAI leakage can also cause reputational damage. Customers and partners expect their data to be handled securely, and any incident can erode trust, harm brand credibility, and lead to lost business.

- Financial losses

In some cases, it may lead to financial losses, either directly through fraud or indirectly through fines, legal costs, and operational disruption following a breach.

- Unauthorized access and further attacks

Finally, there is the risk of unauthorized access and further attacks. If credentials or system information are leaked, attackers can use them to infiltrate systems, escalate privileges, or launch more sophisticated cyberattacks.

Gartner has projected that by 2030, 40% of organizations could face security breaches tied to AI, underscoring the need for proactive measures today.

The scale of the problem: Latest statistics paint a worrying picture

Research from 2025 and 2026 reveals just how widespread GenAI data leakage has become:

- 77% of employees paste data directly into GenAI tools, with more than 50% of those paste events containing corporate information. The average user performs 6.8 pastes per day, 3.8 of which include sensitive data.

- 26.4% of all file uploads to GenAI tools now contain sensitive data (up from 22% just three months earlier). 25% of this is technical data, and 65% of that is proprietary source code.

- 82% of these risky pastes happen via unmanaged personal accounts, bypassing enterprise controls entirely. GenAI has become the #1 channel for corporate data exfiltration, accounting for 32% of all unauthorized data movement.

The message is clear: AI usage is no longer an edge case; it’s the new normal.
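
To put these figures in organizational terms, the back-of-the-envelope calculation below applies the paste statistics to a hypothetical 500-person company. It assumes that "the average user" means an employee who pastes into GenAI tools at all, and that the averages apply uniformly across the workforce. Both are simplifications, and the headcount is made up for illustration.

```python
# Figures quoted in the statistics above.
SHARE_OF_EMPLOYEES_PASTING = 0.77
PASTES_PER_USER_PER_DAY = 6.8
SENSITIVE_PASTES_PER_USER_PER_DAY = 3.8


def estimate_daily_sensitive_pastes(headcount: int) -> int:
    """Estimate sensitive paste events per day for an organization of the given size."""
    pasting_users = headcount * SHARE_OF_EMPLOYEES_PASTING
    return round(pasting_users * SENSITIVE_PASTES_PER_USER_PER_DAY)


def sensitive_share_of_pastes() -> float:
    """Fraction of an average user's pastes that include sensitive data."""
    return SENSITIVE_PASTES_PER_USER_PER_DAY / PASTES_PER_USER_PER_DAY


if __name__ == "__main__":
    headcount = 500  # hypothetical company size
    print(estimate_daily_sensitive_pastes(headcount))  # 1463
    print(f"{sensitive_share_of_pastes():.0%}")  # 56%
```

Under these assumptions, a 500-person company would see roughly 1,463 sensitive paste events per day, and about 56% of a typical user's pastes would include sensitive data.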

Real-world examples: When “just asking AI for help” goes wrong

- Samsung: Engineers pasted confidential source code, meeting transcripts, and hardware specs into ChatGPT for debugging and optimization. The data entered OpenAI’s systems, prompting an immediate internal ban and policy overhaul.

- Law firms and healthcare industry: Associates and clinicians have inadvertently fed client-privileged documents and (even “de-identified”) patient data into public LLMs, triggering compliance reviews under GDPR, HIPAA, and ethical standards.

- Widespread enterprise incidents: Multiple organizations discovered proprietary algorithms, M&A documents, payroll data, and customer PII leaking via free-tier ChatGPT, Claude, and Perplexity accounts, often without the security team’s knowledge.

- Chat & Ask AI app breach: A misconfigured database exposed 300 million messages from 25 million users, highlighting how AI wrapper apps amplify leakage risks.

The Human Element Driving the Risk

Human behavior lies at the heart of GenAI data leakage. Employees adopt AI tools rapidly because they deliver immediate value, yet many underestimate the privacy and compliance implications. Policies often lag behind tool adoption, allowing data leakage to occur unchecked as employees use AI tools without clear guidance, safeguards, or awareness of the risks.

Human Risk Intelligence: A Game-Changing Defense Against AI Data Leaks

Traditional training and governance fall short against GenAI use. Human Risk Intelligence (HRI) changes that. HRI combines behavioral data, threat intelligence, and AI-driven insights to predict and prevent human-driven risks. Organizations can reduce exposure through a layered strategy:

- Develop and communicate clear AI usage policies: Define what data can and cannot be shared, and provide approved enterprise-grade alternatives.

- Deliver targeted, behavior-focused training: Use insights from Human Risk Intelligence to tailor content around common pitfalls like pasting code or client data.

- Implement monitoring and guardrails: Deploy solutions that detect risky patterns in real time without overly disrupting workflows.

- Foster a culture of cyber hygiene: Encourage reporting of near-misses and promote secure AI practices.

- Measure and iterate: Track key metrics such as paste incidents, risk scores, and training effectiveness to continuously improve controls, refine policies, and reduce the likelihood of future data leakage.

By integrating Human Risk Intelligence, companies can predict and prevent leaks rather than merely responding after the fact.
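
The "measure and iterate" step above can be sketched as a small metrics summary over logged paste events. The `PasteEvent` record, its fields, and the `summarize` function are hypothetical names for this example and do not represent any particular product's data model. The sketch assumes a monitoring tool already records whether each paste contained sensitive data and whether it went through a managed account.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class PasteEvent:
    """One paste into a GenAI tool, as a hypothetical monitoring tool might log it."""
    user: str
    contains_sensitive_data: bool
    managed_account: bool


def summarize(events: list[PasteEvent]) -> dict:
    """Compute simple leakage metrics from a list of paste events."""
    risky = [e for e in events if e.contains_sensitive_data]
    unmanaged_risky = [e for e in risky if not e.managed_account]
    per_user = Counter(e.user for e in risky)
    return {
        "total_pastes": len(events),
        "sensitive_pastes": len(risky),
        # Share of all pastes that contained sensitive data.
        "sensitive_rate": len(risky) / len(events) if events else 0.0,
        # Share of sensitive pastes made through unmanaged personal accounts.
        "unmanaged_share": len(unmanaged_risky) / len(risky) if risky else 0.0,
        # Users with the most sensitive pastes, as candidates for targeted training.
        "top_users": per_user.most_common(3),
    }
```

Comparing these numbers from one reporting period to the next gives a rough indication of whether policies and training are reducing risky pastes, and the per-user counts suggest where targeted training might help most.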

Governing the Use of AI with HRI

Generative AI offers tremendous potential for productivity and innovation, but unchecked employee use of public tools creates a significant vector for data leakage. The 2025-2026 statistics and examples make it clear: this is not a passing trend but a structural risk in modern workplaces.

The time to act is now. Assess your current exposure to AI tools, strengthen policies and training with behavioral insights, and build a culture where secure practices support innovation. In doing so, you turn a potential vulnerability into a competitive advantage.

